Computer Vision

ACTL3143 & ACTL5111 Deep Learning for Actuaries

Images

Lecture Outline

Images

Convolutional Layers

Convolutional Layer Options

Convolutional Neural Networks

Demo: Character Recognition

Demo: Character Recognition II

Error Analysis

Hyperparameter tuning

Leveraging Solutions From Benchmark Problems

Transfer Learning

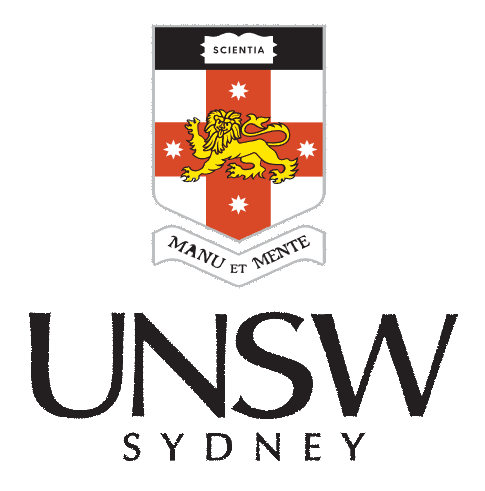

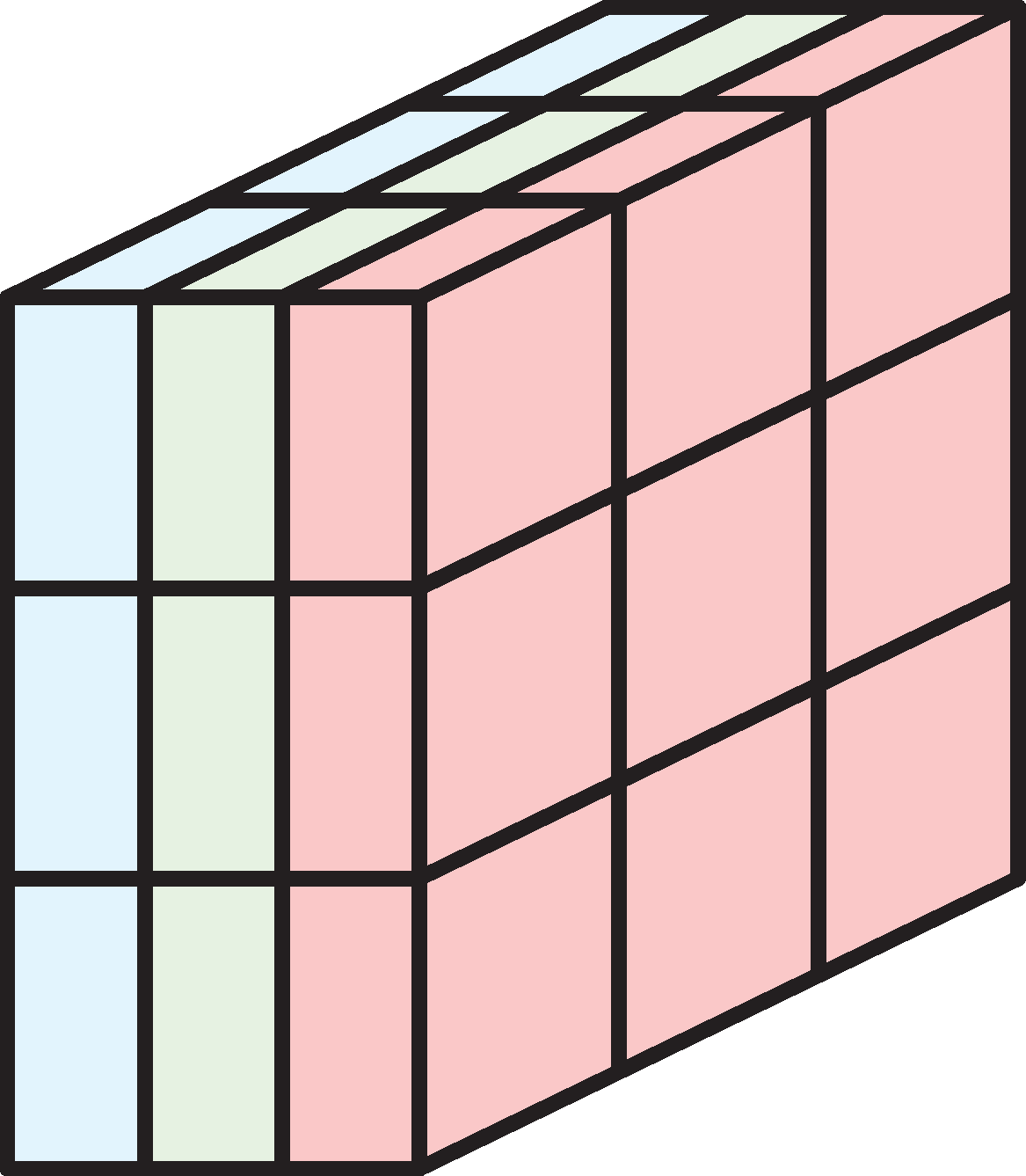

Shapes of data

Illustration of tensors of different rank.

Source: Paras Patidar (2019), Tensors — Representation of Data In Neural Networks, Medium article.

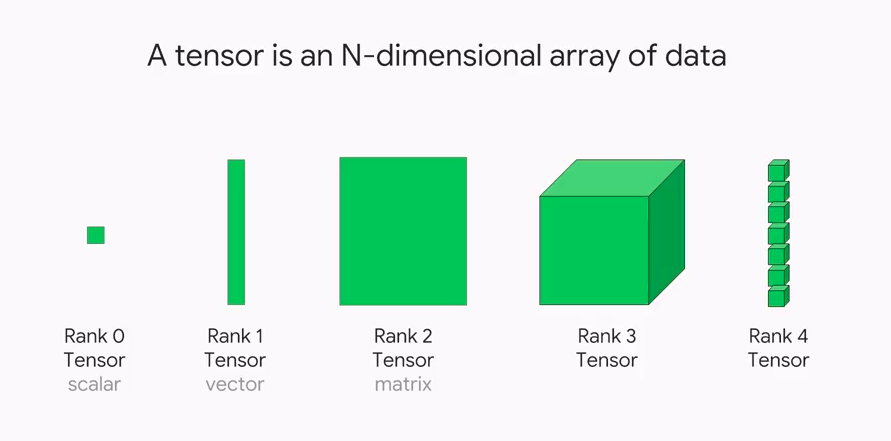

Shapes of photos

A photo is a rank 3 tensor.

Source: Kim et al (2021), Data Hiding Method for Color AMBTC Compressed Images Using Color Difference, Applied Sciences.
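As a minimal sketch of what "rank 3" means in code, the snippet below builds a tiny, hypothetical 2x2 RGB photo as a NumPy array (the pixel values are made up for illustration) and inspects its rank and shape.

```python
import numpy as np

# A hypothetical 2x2 RGB "photo": axes are (height, width, channels).
photo = np.array(
    [
        [[255, 255, 0], [0, 0, 0]],
        [[0, 0, 0], [255, 255, 0]],
    ],
    dtype=np.uint8,
)

print(photo.ndim)   # rank, i.e. the number of axes
print(photo.shape)  # (height, width, channels)
print(photo[0, 0])  # the three channel values of the top-left pixel
```

Indexing with two numbers (row, column) returns the three channel values of a single pixel.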

How the computer sees them

'Shapes are: (16, 16, 3), (16, 16, 3), (16, 16, 3), (16, 16, 3).'

[Output: four arrays, each of shape (16, 16, 3) with dtype=uint8, printed in full. In the first three arrays every pixel is either [0, 0, 0] (black) or [255, 255, 0] (yellow). The fourth array contains the pixel values [0, 0, 0] (black), [255, 163, 177] (pink), [255, 255, 255] (white) and [51, 0, 255] (blue).]

How we see them

Why is 255 special?

The intensity of each colour channel of a pixel is stored in one byte.

One byte is 8 bits, so in binary that is 00000000 to 11111111.

The largest unsigned number this can be is 2^8-1 = 255.

If you had signed numbers, this would go from -128 to 127.

Alternatively, hexadecimal numbers are used. E.g. 10100001 is split into 1010 0001, and 1010=A, 0001=1, so combined it is 0xA1.
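The byte arithmetic above can be checked directly in Python. This is a small sketch using built-in integer conversions and NumPy's `iinfo` to look up the ranges of the 8-bit integer types.

```python
import numpy as np

# Largest value that fits in 8 bits when treated as an unsigned number.
largest = 2**8 - 1
assert int("11111111", 2) == largest

# Ranges of the unsigned and signed 8-bit integer types.
unsigned_range = (np.iinfo(np.uint8).min, np.iinfo(np.uint8).max)
signed_range = (np.iinfo(np.int8).min, np.iinfo(np.int8).max)

# The binary number 10100001 written in hexadecimal.
hex_string = hex(0b10100001)

print(largest, unsigned_range, signed_range, hex_string)
```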

Image editing with kernels

Take a look at https://setosa.io/ev/image-kernels/.

An example of an image kernel in action.

Source: Stanford’s deep learning tutorial via Stack Exchange.
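To make the idea of an image kernel concrete, here is a minimal sketch that applies a 3x3 box-blur kernel to a small grayscale image. The 5x5 image, the choice of kernel, and the helper name `apply_kernel` are assumptions made for illustration; they are not taken from the linked page.

```python
import numpy as np

def apply_kernel(image, kernel):
    """Slide a 2D kernel over a 2D image (no padding, stride 1)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the patch by the kernel elementwise, then sum.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hypothetical image: all black except one bright pixel in the centre.
image = np.zeros((5, 5))
image[2, 2] = 9.0

# Box blur: every output pixel is the average of a 3x3 neighbourhood.
box_blur = np.ones((3, 3)) / 9

blurred = apply_kernel(image, box_blur)
print(blurred)
```

Every 3x3 window of this image contains the bright centre pixel, so the blur spreads its intensity evenly over the whole output.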

Convolutional Layers

‘Convolution’ not ‘complicated’

Say X_1, X_2 \sim f_X are i.i.d., and we look at S = X_1 + X_2.

The density for S is then

f_S(s) = \int_{x_1=-\infty}^{\infty} f_X(x_1) \, f_X(s-x_1) \,\mathrm{d}x_1 .

This is the convolution operation, f_S = f_X \star f_X.
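As a numerical sanity check of this formula, the sketch below takes X to be Exponential(1) (an assumption chosen because the sum of two such variables has the known Gamma(2, 1) density s e^{-s}) and approximates the integral by a Riemann sum using `np.convolve`.

```python
import numpy as np

dx = 0.01
x = np.arange(0, 10, dx)

# Density of X ~ Exponential(1) on the grid (assumed example distribution).
f_X = np.exp(-x)

# Discrete convolution times dx approximates the convolution integral.
f_S = np.convolve(f_X, f_X)[: len(x)] * dx

# Known density of the sum of two independent Exponential(1) variables.
exact = x * np.exp(-x)

max_err = np.max(np.abs(f_S - exact))
print(max_err)
```

The error shrinks as the grid spacing `dx` gets smaller.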

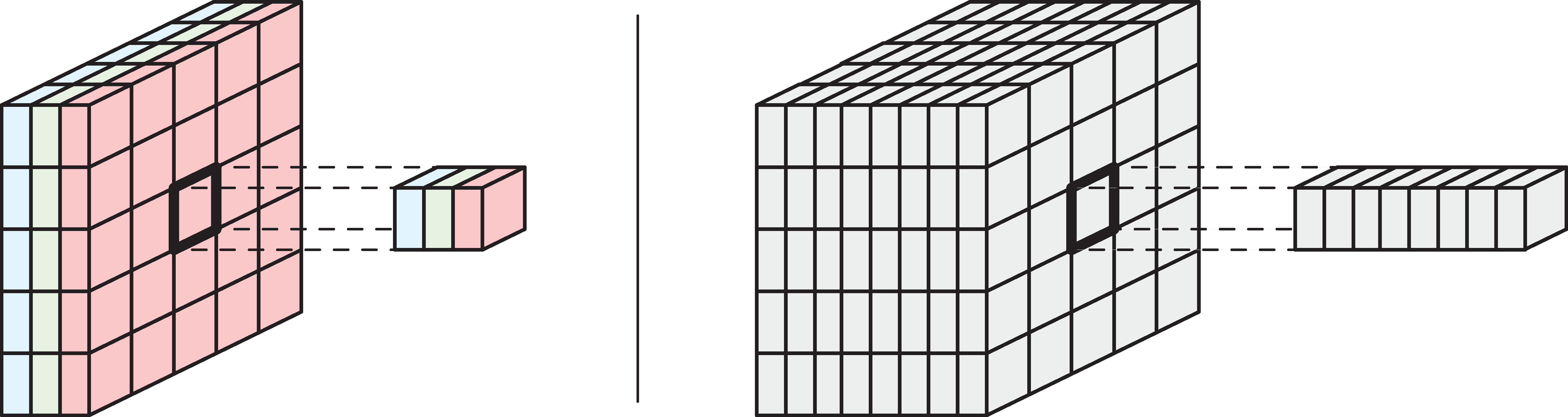

Images are rank 3 tensors

Height, width, and number of channels.

Examples of rank 3 tensors.

Grayscale image has 1 channel. RGB image has 3 channels.

Example: Yellow = Red + Green.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

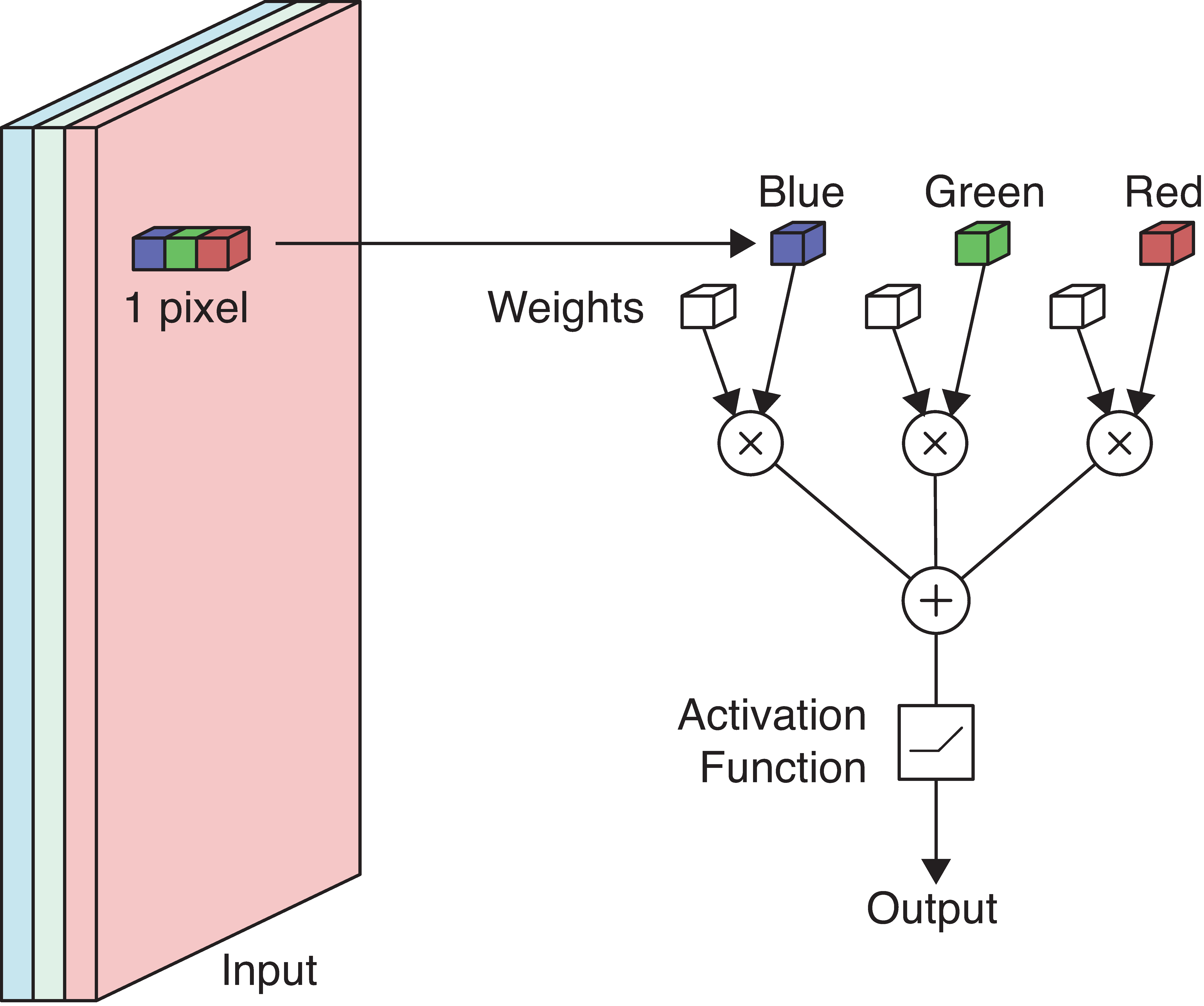

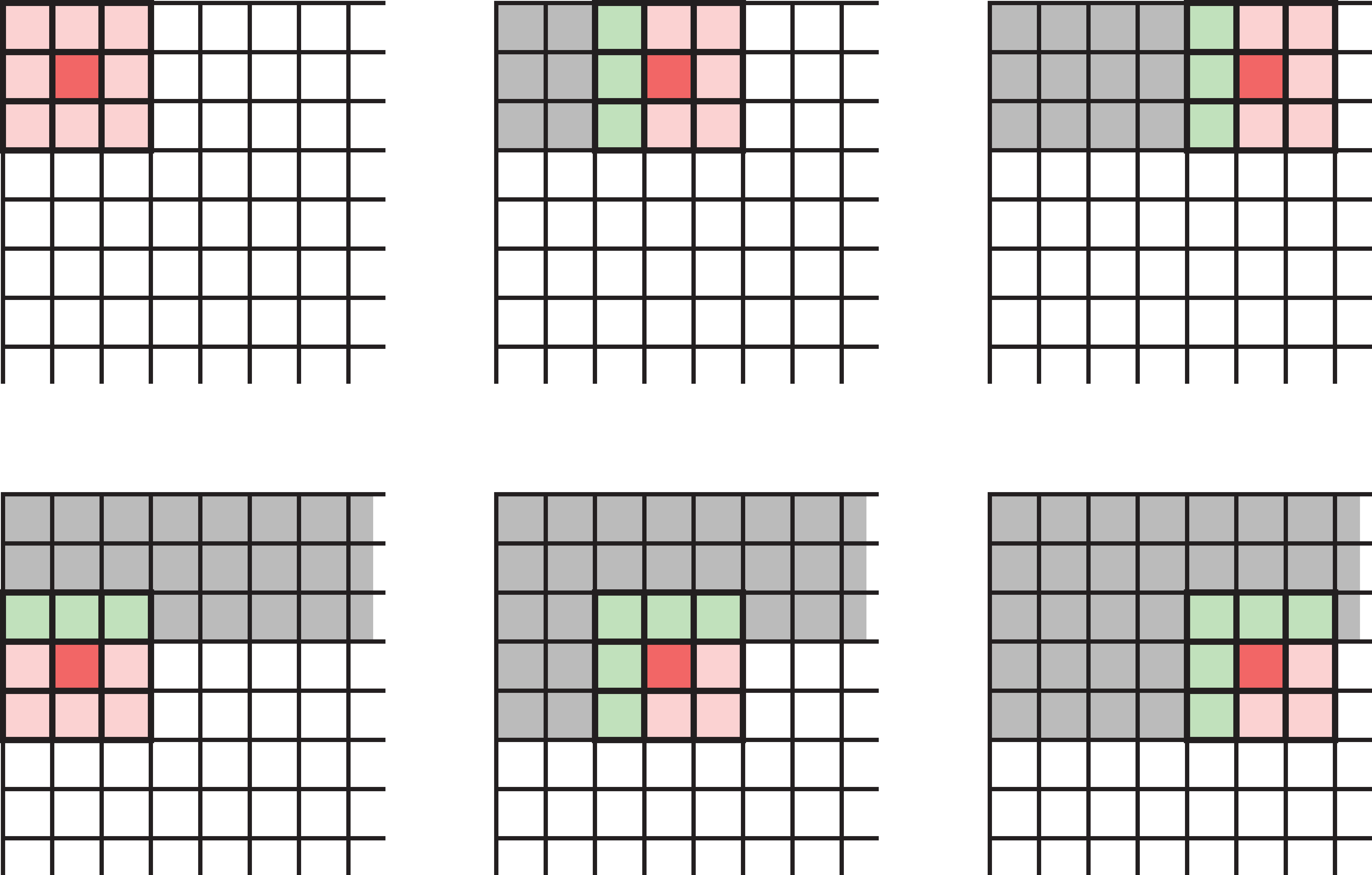

Example: Detecting yellow

Apply a neuron to each pixel in the image.

If red/green \nearrow or blue \searrow then yellowness \nearrow.

Set RGB weights to 1, 1, -1.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
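A minimal sketch of this yellow detector: one neuron with weights 1, 1, -1 and no bias or activation (an assumption, since the slide only gives the weights) applied to a few example pixels, and then to every pixel of a tiny image at once.

```python
import numpy as np

weights = np.array([1.0, 1.0, -1.0])  # weights for R, G, B

# Cast to float so the sums are not clipped by uint8 arithmetic.
pixels = np.array(
    [
        [255, 255, 0],    # yellow
        [255, 255, 255],  # white
        [51, 0, 255],     # blue
        [0, 0, 0],        # black
    ],
    dtype=float,
)

# One "yellowness" score per pixel: R + G - B.
yellowness = pixels @ weights
print(yellowness)

# The same neuron applied to every pixel of a 2x2 RGB image:
# a 3-channel input becomes a 1-channel output.
image = pixels.reshape(2, 2, 3)
output = image @ weights
print(output.shape)
```

The yellow pixel gets the highest score, while the blue pixel scores below black.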

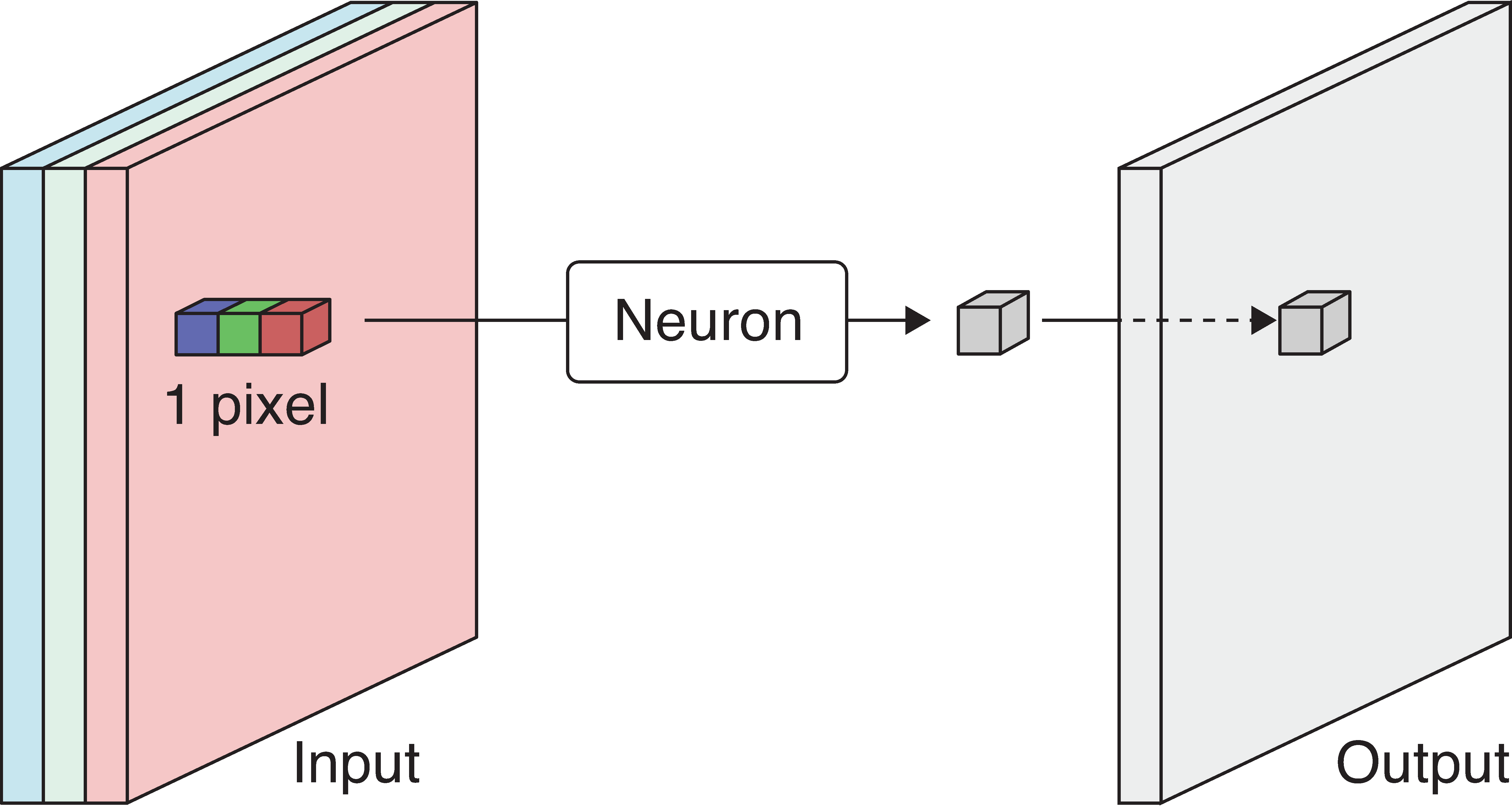

Example: Detecting yellow II

Scan the 3-channel input (colour image) with the neuron to produce a 1-channel output (grayscale image).

The output is produced by sweeping the neuron over the input. This is called convolution.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

Example: Detecting yellow III

The more yellow the pixel in the colour image (left), the more white it is in the grayscale image.

The neuron, or equivalently its set of weights, is called a filter. We convolve the image with a filter, i.e. a convolutional filter.

Terminology

- The same neuron is used to sweep over the image, so we can store the weights in some shared memory and process the pixels in parallel. We say that the neurons are weight sharing.

- In the previous example, the neuron only takes one pixel as input. Usually a larger filter containing a block of weights is used to process not only a pixel but also its neighboring pixels all at once.

- The weights are called the filter kernels.

- The cluster of pixels that forms the input of a filter is called its footprint.

Spatial filter

Example 3x3 filter

When a filter’s footprint is > 1 pixel, it is a spatial filter.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

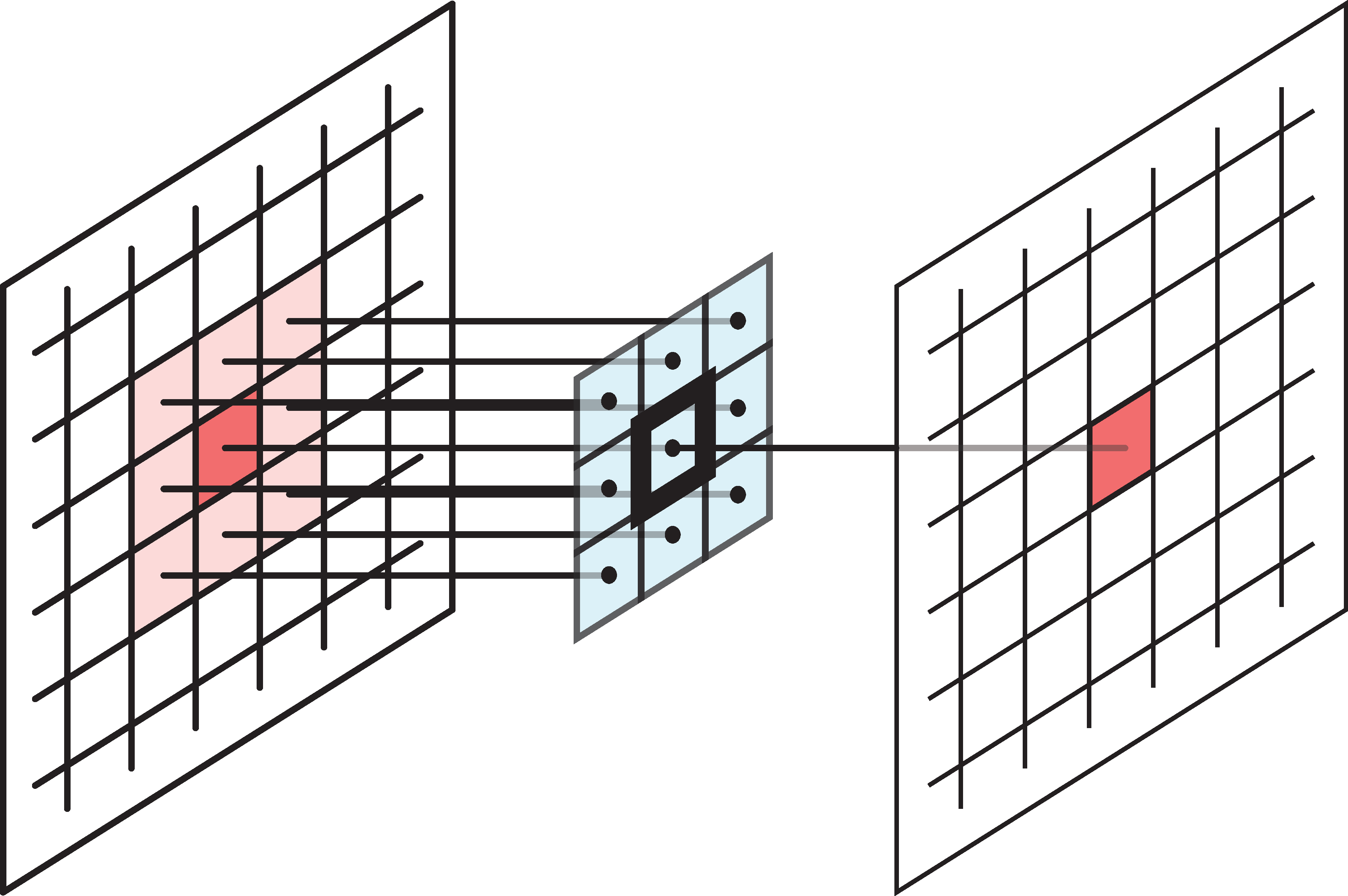

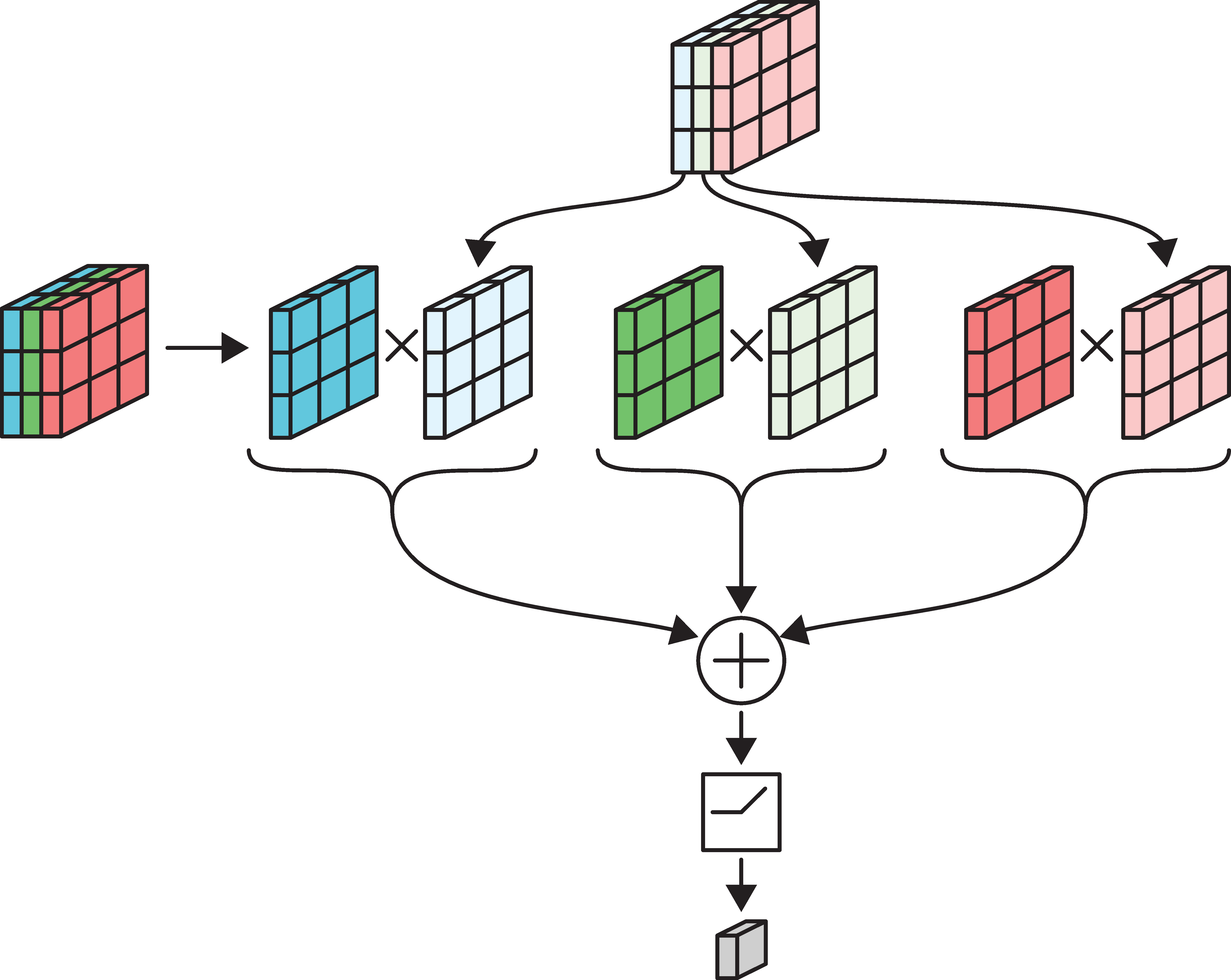

Multidimensional convolution

The number of channels in the filter must equal the number of channels in the input.

Example: a 3x3 filter with 3 channels, containing 27 weights.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

Example: 3x3 filter over RGB input

Each channel is multiplied separately, and then the results are added together.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
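The following sketch shows one way to compute this in NumPy, assuming no padding, a stride of one, and no bias or activation. The 6x6 random input and the function name `conv2d_valid` are hypothetical choices for illustration.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide a (kh, kw, c) filter over an (h, w, c) image."""
    h, w, c = image.shape
    kh, kw, kc = kernel.shape
    assert c == kc, "filter and input must have the same number of channels"
    out = np.zeros((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            patch = image[i:i + kh, j:j + kw, :]
            # Multiply every channel by its own slice of weights, then sum.
            out[i, j] = np.sum(patch * kernel)
    return out

rng = np.random.default_rng(0)
image = rng.random((6, 6, 3))   # hypothetical RGB input
kernel = rng.random((3, 3, 3))  # one 3x3 filter with 3 channels

out = conv2d_valid(image, kernel)
print(kernel.size)  # number of weights in the filter
print(out.shape)    # one output channel

# The same top-left value, computed channel by channel.
per_channel = [np.sum(image[:3, :3, ch] * kernel[:, :, ch]) for ch in range(3)]
```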

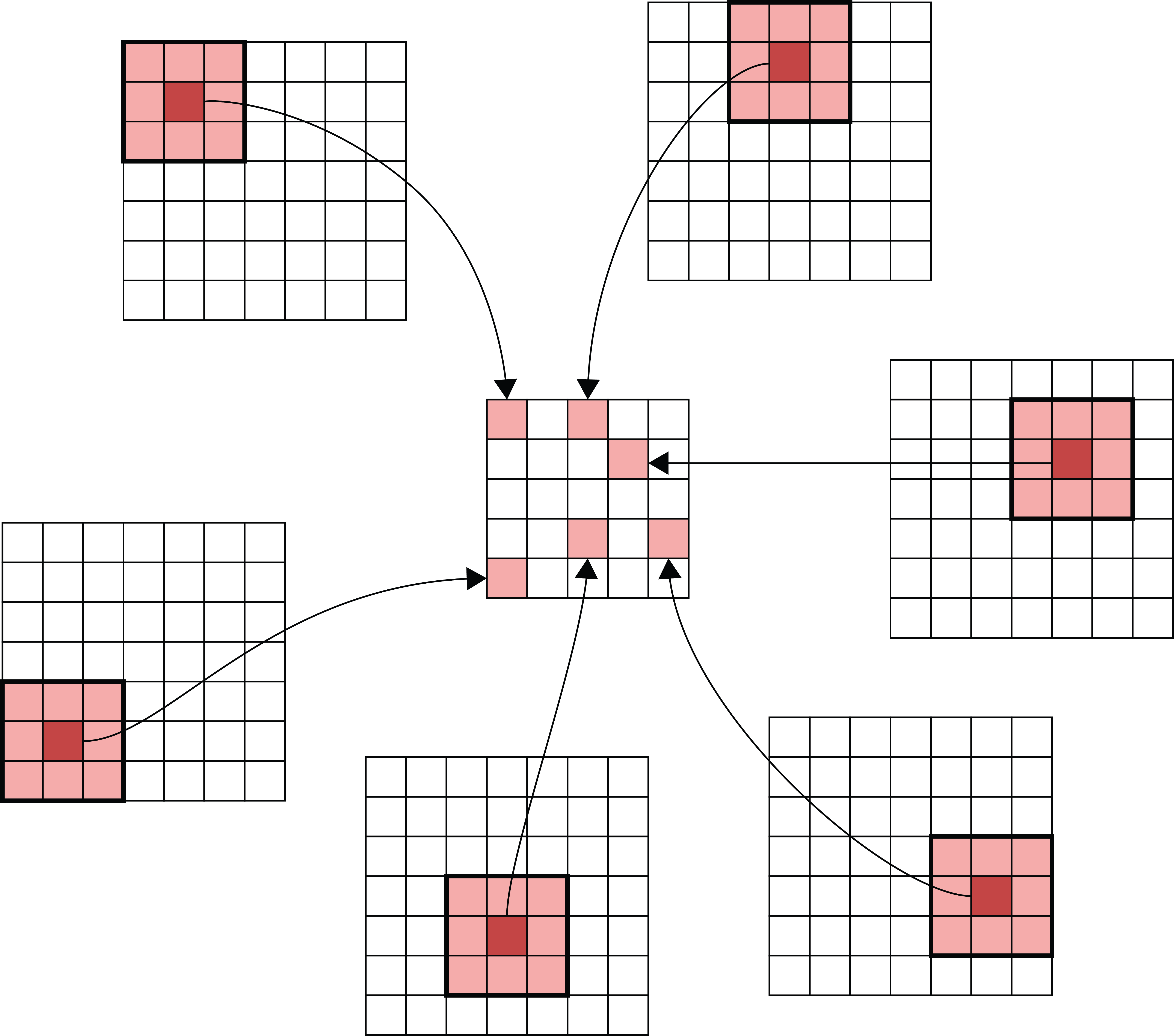

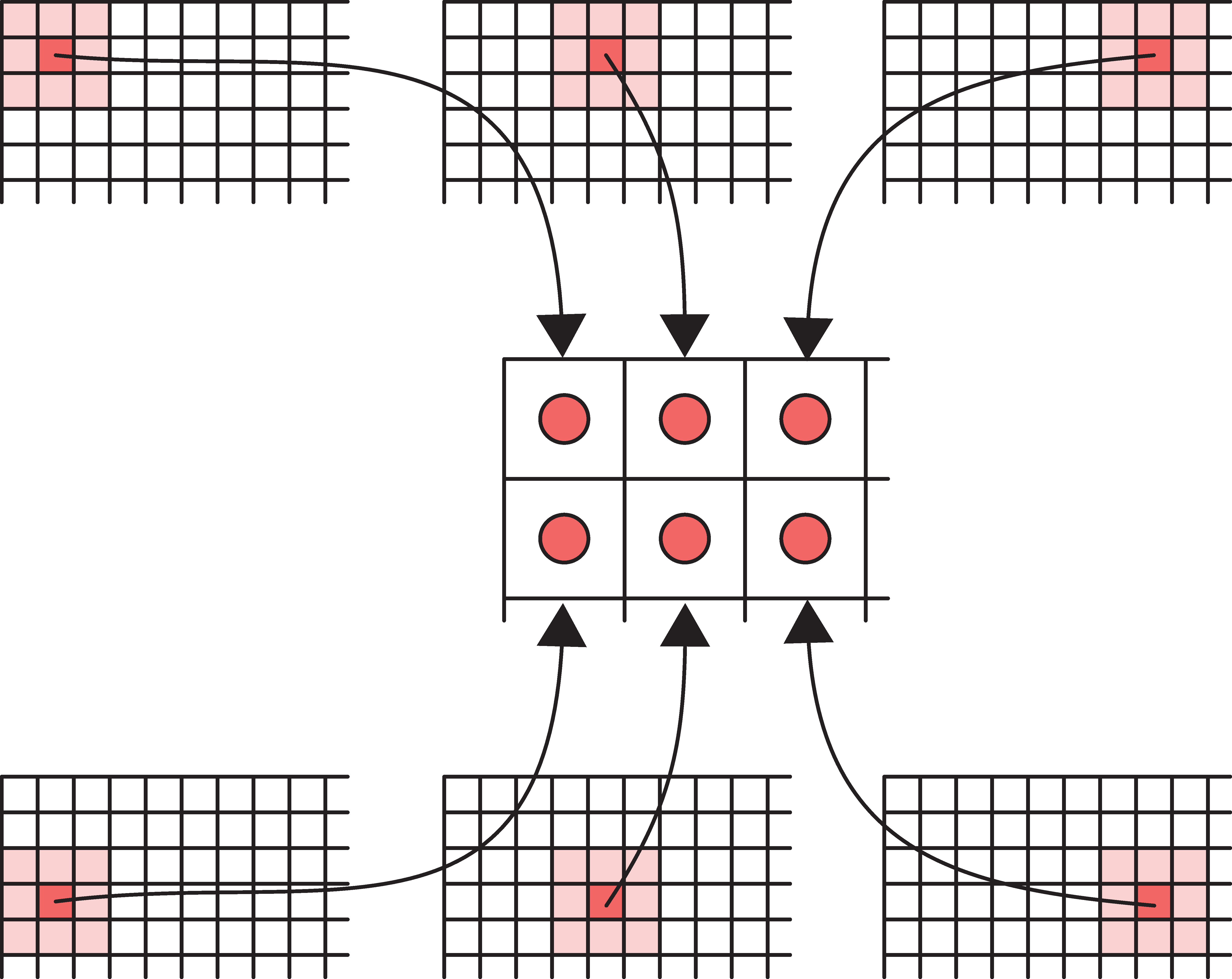

Input-output relationship

Matching the original image footprints against the output location.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

Convolutional Layer Options

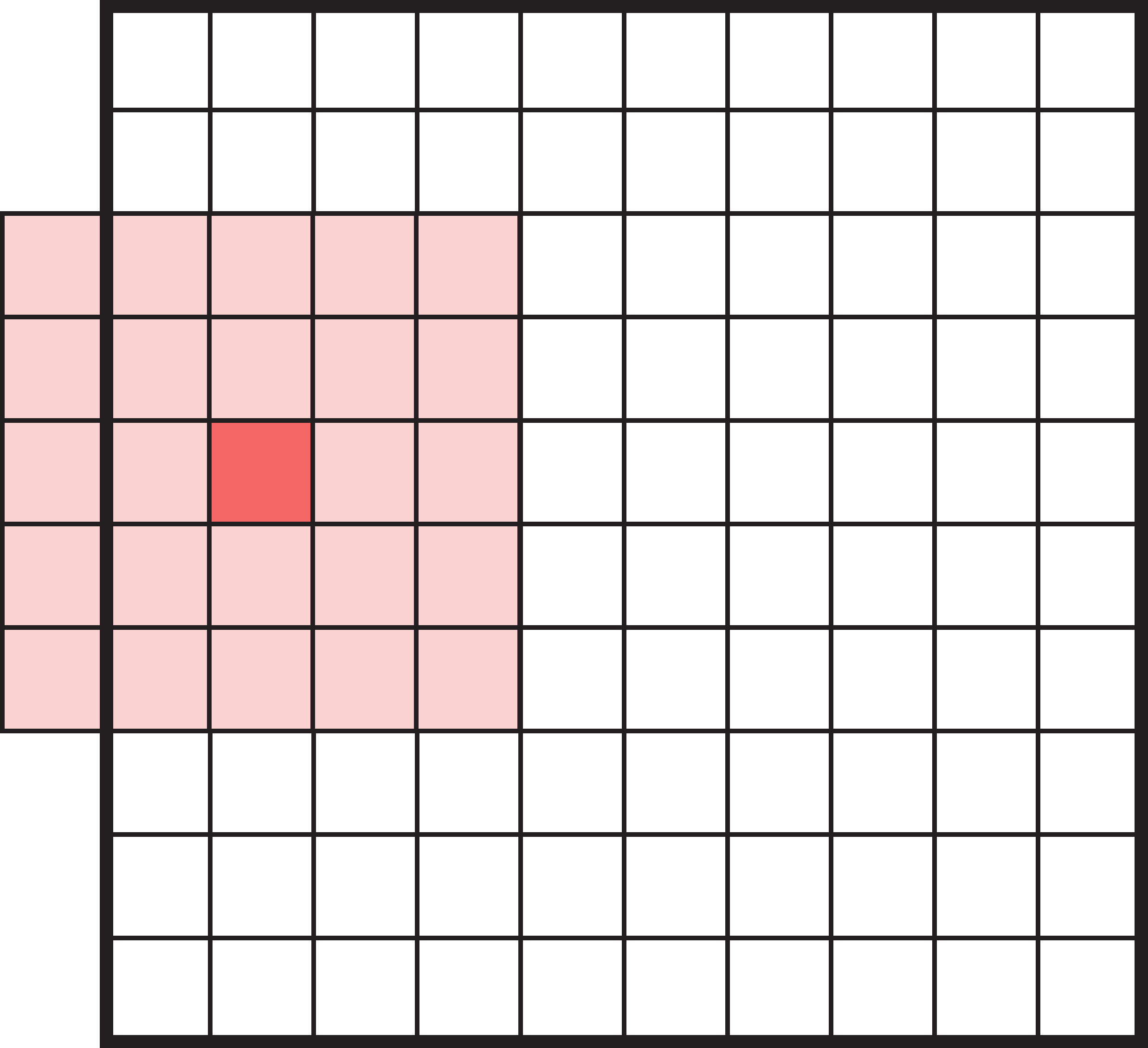

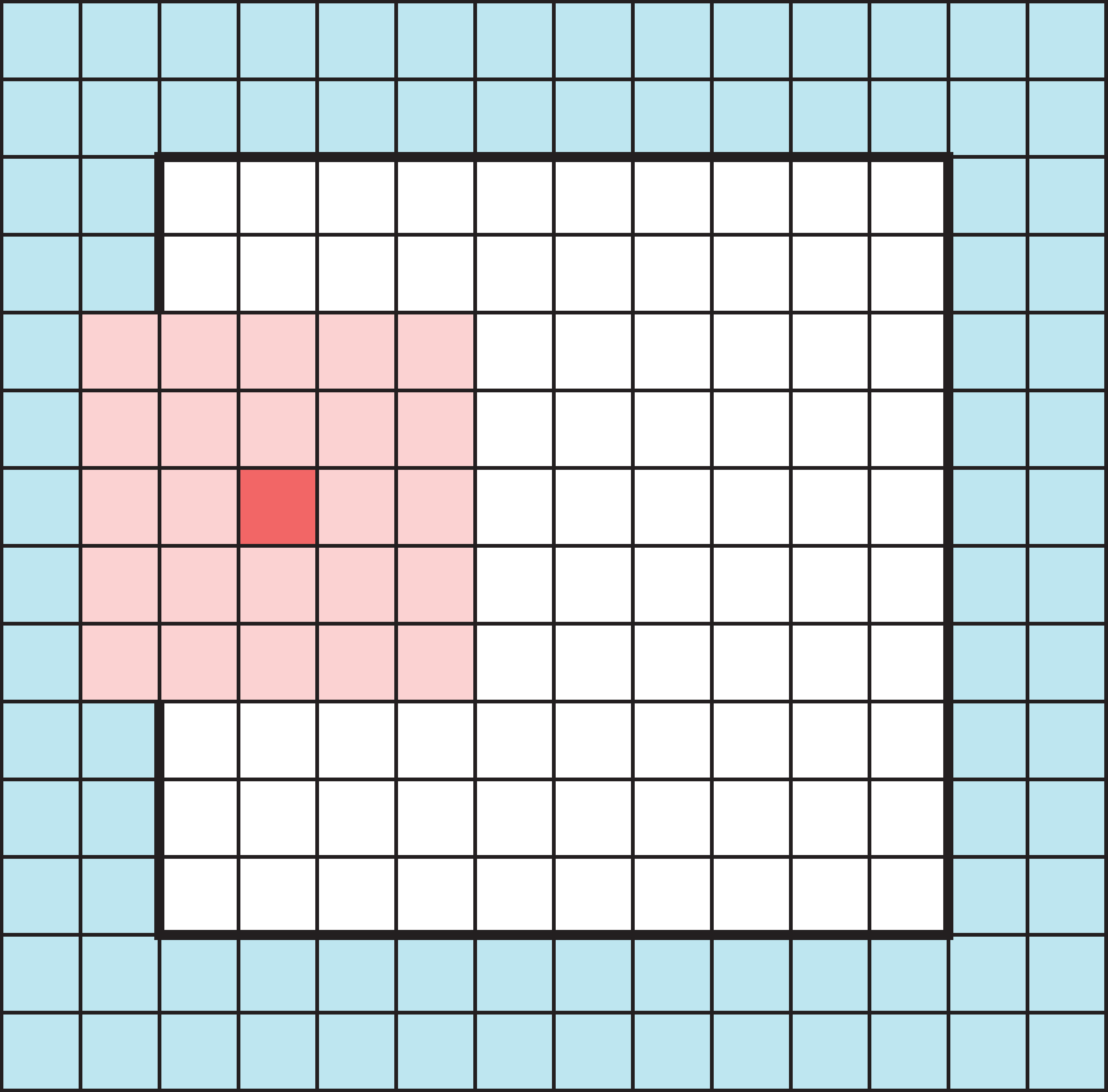

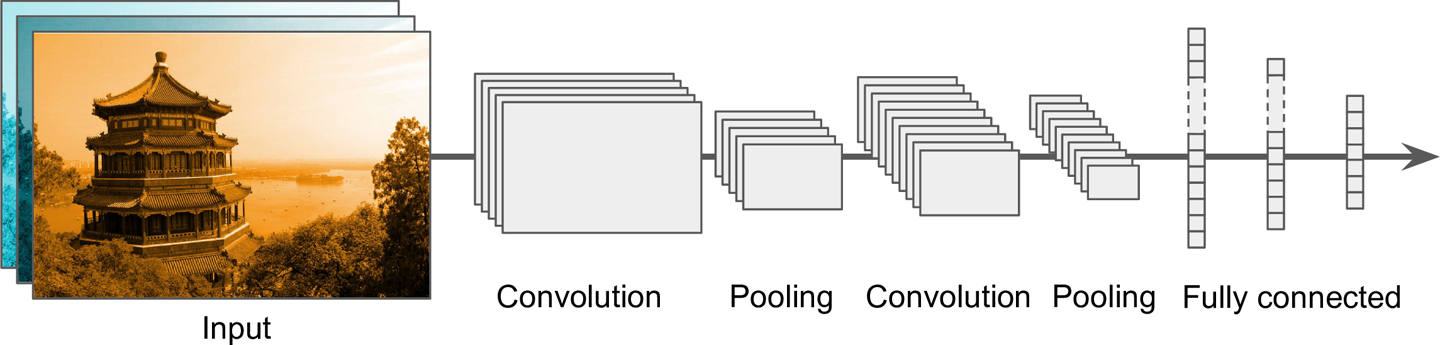

Padding

What happens when filters go off the edge of the input?

- We need a way to stop the filter’s receptive field from falling off the side of the input.

- If we only scan the filter over places of the input where the filter can fit perfectly, it will lead to loss of information, especially after many filters.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

Padding

Add a border of extra elements around the input, called padding. Normally we place zeros in all the new elements, called zero padding.

Padded values can be added to the outside of the input.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
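A short sketch of zero padding with `np.pad`, assuming a hypothetical 6x6 single-channel input and a 3x3 filter moved one pixel at a time. It compares the output size with and without a one-element border.

```python
import numpy as np

image = np.arange(1, 37).reshape(6, 6)  # hypothetical 6x6 input

# Add a border of one zero on every side.
padded = np.pad(image, pad_width=1, mode="constant", constant_values=0)
print(padded.shape)

kernel_size = 3
# Number of positions where a 3x3 filter fits (stride of one).
size_without_padding = image.shape[0] - kernel_size + 1
size_with_padding = padded.shape[0] - kernel_size + 1
print(size_without_padding, size_with_padding)
```

With the border in place, the output is the same size as the original input.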

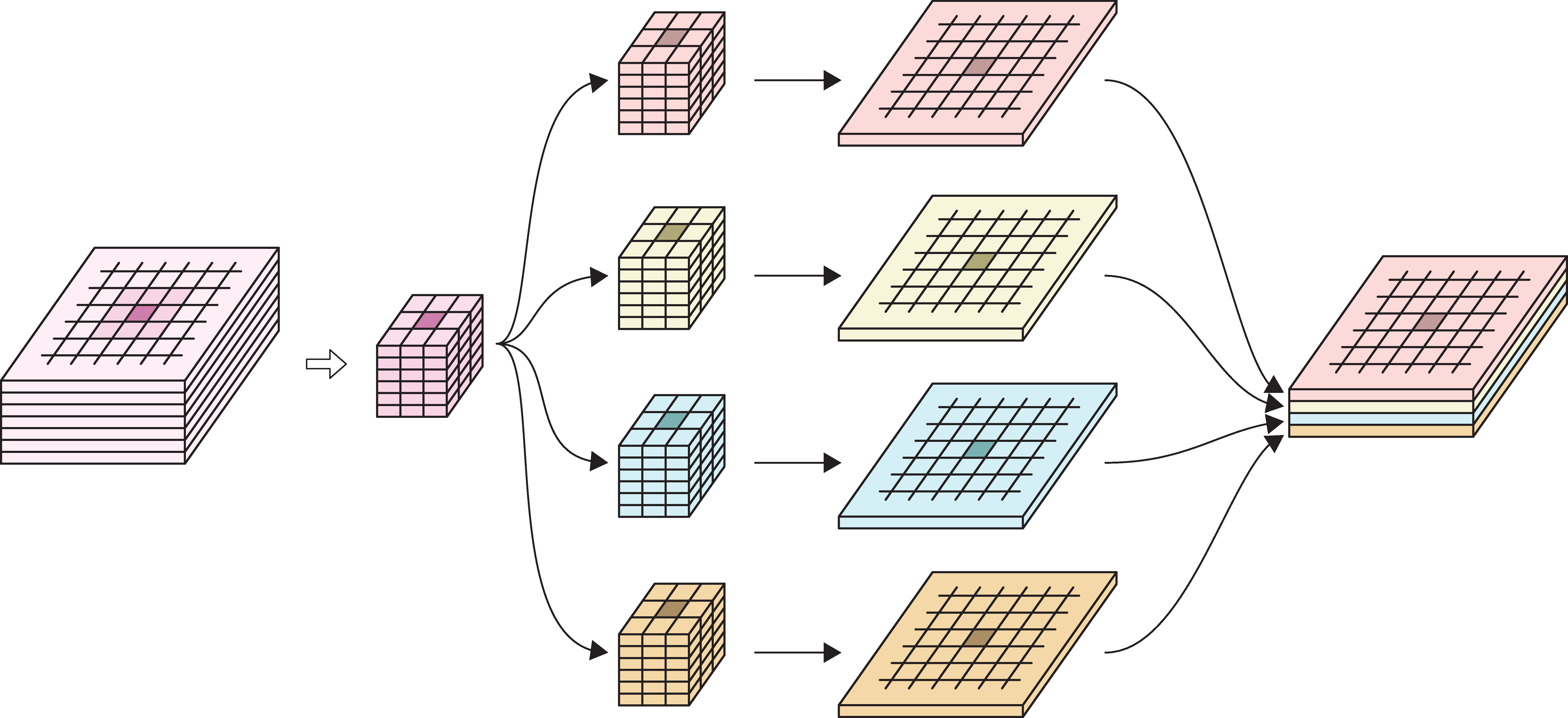

Convolution layer

- Multiple filters are bundled together in one layer.

- The filters are applied simultaneously and independently to the input.

- Filters can have different footprints, but in practice we almost always use the same footprint for every filter in a convolution layer.

- Number of channels in the output will be the same as the number of filters.

Example

In the image:

- 6-channel input tensor

- input pixels

- four 3x3 filters

- four output tensors

- final output tensor.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
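The example above can be sketched in NumPy as follows. The 5x5 spatial size, the random values, and the absence of padding, bias and activation are assumptions; only the 6 input channels and the four 3x3 filters come from the slide.

```python
import numpy as np

rng = np.random.default_rng(1)
x = rng.random((5, 5, 6))           # hypothetical 5x5 input with 6 channels
filters = rng.random((4, 3, 3, 6))  # four 3x3 filters, each 6 channels deep

out_h, out_w = x.shape[0] - 2, x.shape[1] - 2
output = np.zeros((out_h, out_w, len(filters)))

# Each filter scans the input independently and fills one output channel.
for f, kernel in enumerate(filters):
    for i in range(out_h):
        for j in range(out_w):
            output[i, j, f] = np.sum(x[i:i + 3, j:j + 3, :] * kernel)

weights_per_filter = filters[0].size
print(weights_per_filter)
print(output.shape)
```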

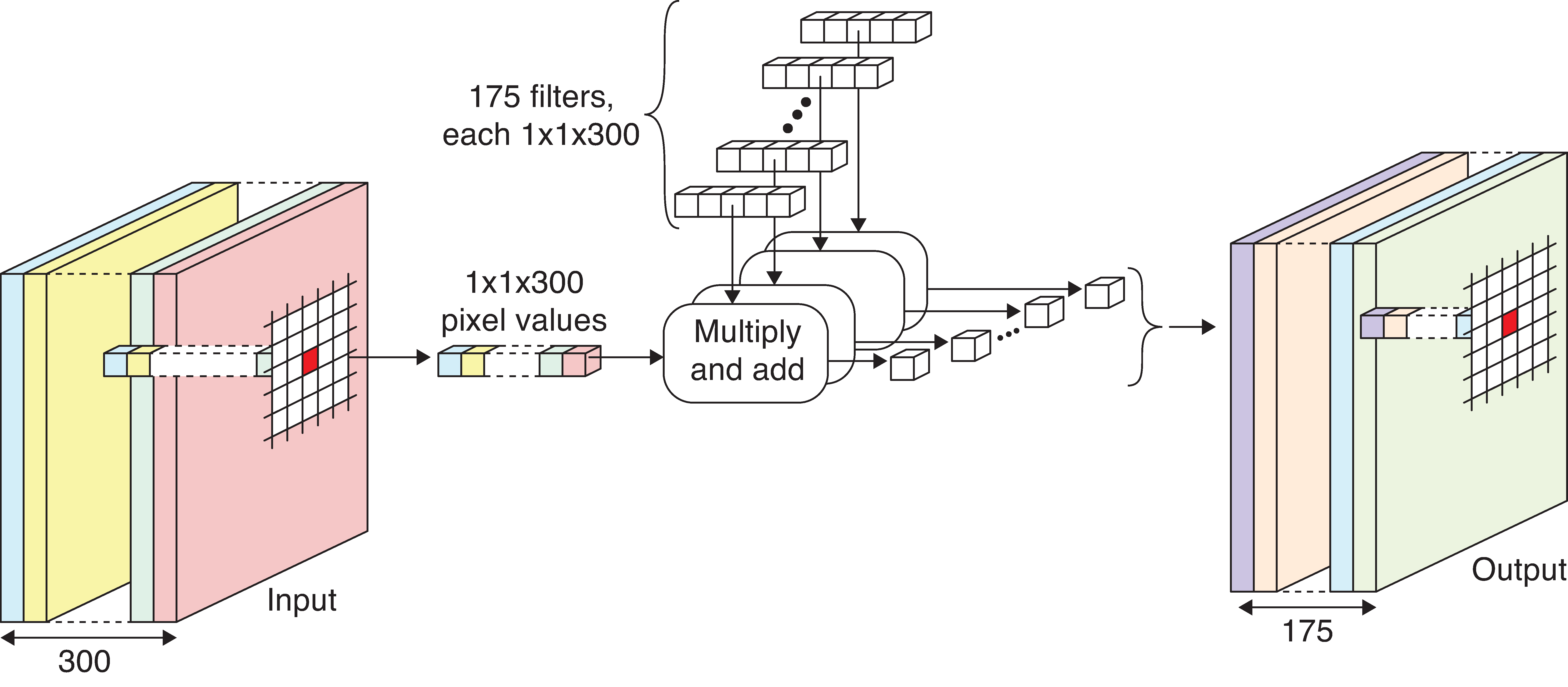

1x1 convolution

- Feature reduction: Reduce the number of channels in the input tensor (removing correlated features) by using fewer filters than the number of channels in the input. This works because the number of channels in the output is always the same as the number of filters.

- 1x1 convolution: Convolution using 1x1 filters.

- When the channels are correlated, 1x1 convolution can reduce the number of channels with little loss of information.

Example of 1x1 convolution

Example network with 1x1 convolution.

- Input tensor contains 300 channels.

- Use 175 1x1 filters in the convolution layer (300 weights each).

- Each filter produces a 1-channel output.

- Final output tensor has 175 channels.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
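Because a 1x1 filter only looks at one pixel at a time, a layer of 1x1 filters is the same as applying one matrix multiplication to the channel vector of every pixel. The sketch below assumes a hypothetical 8x8 spatial size, random weights, and no bias or activation.

```python
import numpy as np

rng = np.random.default_rng(2)
x = rng.random((8, 8, 300))       # hypothetical 8x8 input with 300 channels
weights = rng.random((300, 175))  # 175 filters, 300 weights each

# Apply all 175 filters to every pixel at once.
output = x @ weights
print(output.shape)
print(weights.size)  # total number of weights in the layer
```

The spatial size is unchanged; only the number of channels drops from 300 to 175.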

Striding

We don’t have to go one pixel across/down at a time.

Example: Use a stride of three horizontally and two vertically.

Dimension of output will be smaller than input.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
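The size of the output along one axis can be computed from the input size, filter size and stride. This is a sketch assuming no padding; the 8x10 input and 3x3 filter are hypothetical, and the function name `output_size` is introduced here for illustration.

```python
def output_size(input_size: int, filter_size: int, stride: int) -> int:
    """Number of positions where the filter fits along one axis (no padding)."""
    return (input_size - filter_size) // stride + 1

# Hypothetical input that is 8 pixels tall and 10 pixels wide, 3x3 filter.
height_out = output_size(8, filter_size=3, stride=2)   # stride 2 vertically
width_out = output_size(10, filter_size=3, stride=3)   # stride 3 horizontally
print(height_out, width_out)

# With a stride of one, the output only shrinks by filter_size - 1.
print(output_size(10, filter_size=3, stride=1))
```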

Choosing strides

When a filter scans the input step by step, it processes the same input elements multiple times. Even with larger strides, this can still happen (left image).

If we want to save time, we can choose strides that prevent input elements from being used more than once. Example (right image): 3x3 filter, stride 3 in both directions.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.

Specifying a convolutional layer

Need to choose:

- number of filters,

- their footprints (e.g. 3x3, 5x5, etc.),

- activation functions,

- padding & striding (optional).

All the filter weights are learned during training.

Convolutional Neural Networks

Definition of CNN

A neural network that uses convolution layers is called a convolutional neural network.

Source: Randall Munroe (2019), xkcd #2173: Trained a Neural Net.

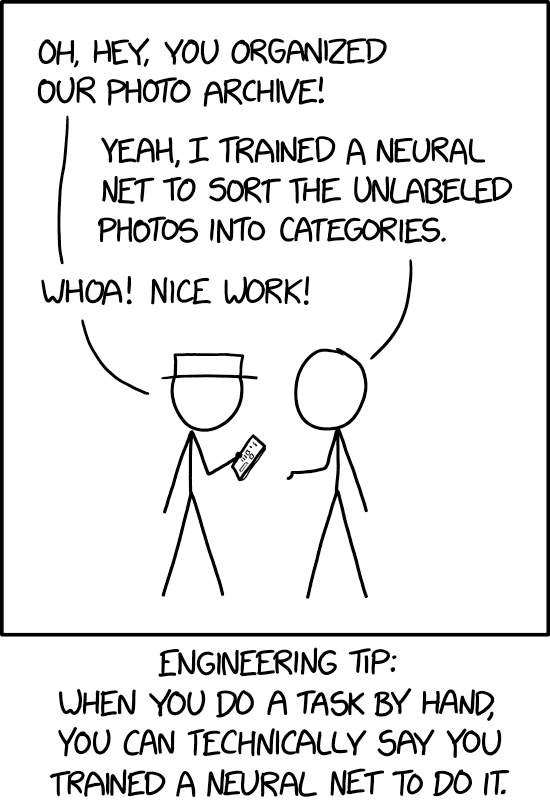

Architecture

Typical CNN architecture.

Source: Aurélien Géron (2019), Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd Edition, Figure 14-11.

Architecture #2

Source: MathWorks, Introducing Deep Learning with MATLAB, Ebook.

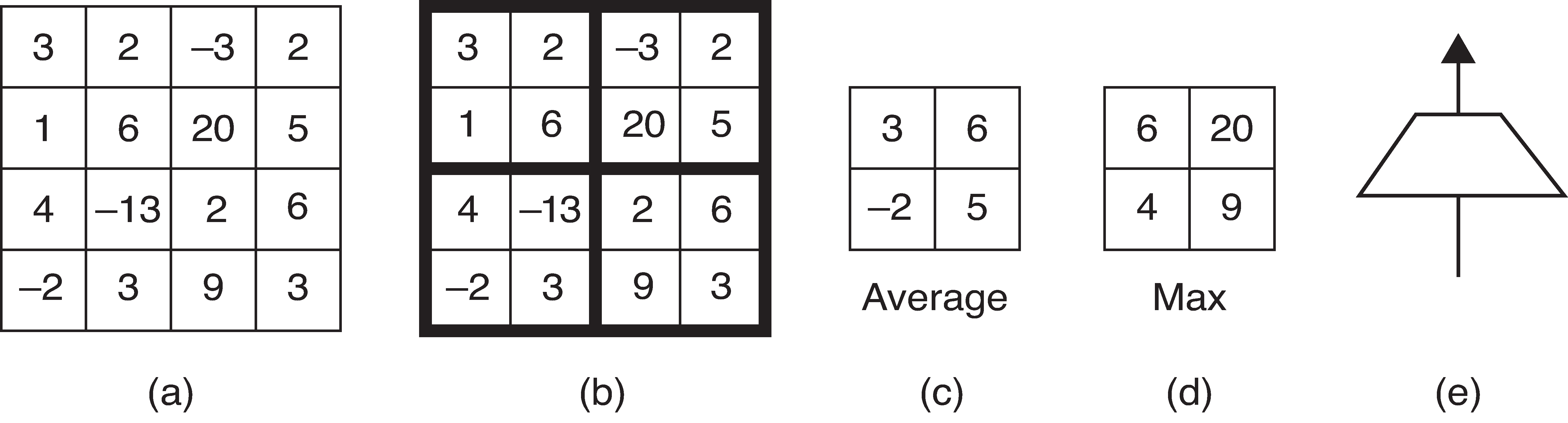

Pooling

Pooling, or downsampling, is a technique to blur a tensor.

Illustration of pool operations.

- (a): Input tensor

- (b): Subdivide input tensor into 2x2 blocks

- (c): Average pooling

- (d): Max pooling

- (e): Icon for a pooling layer

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
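Average and max pooling over 2x2 blocks can be sketched with a reshape. The 4x4 input and the helper name `pool2x2` are hypothetical, and the sketch assumes the height and width are both even.

```python
import numpy as np

def pool2x2(x, reduce_fn):
    """Split a 2D array into 2x2 blocks and reduce each block to one value."""
    h, w = x.shape
    blocks = x.reshape(h // 2, 2, w // 2, 2)
    return reduce_fn(blocks, axis=(1, 3))

x = np.arange(16.0).reshape(4, 4)  # hypothetical 4x4 input

avg_pooled = pool2x2(x, np.mean)
max_pooled = pool2x2(x, np.max)
print(avg_pooled)
print(max_pooled)
```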

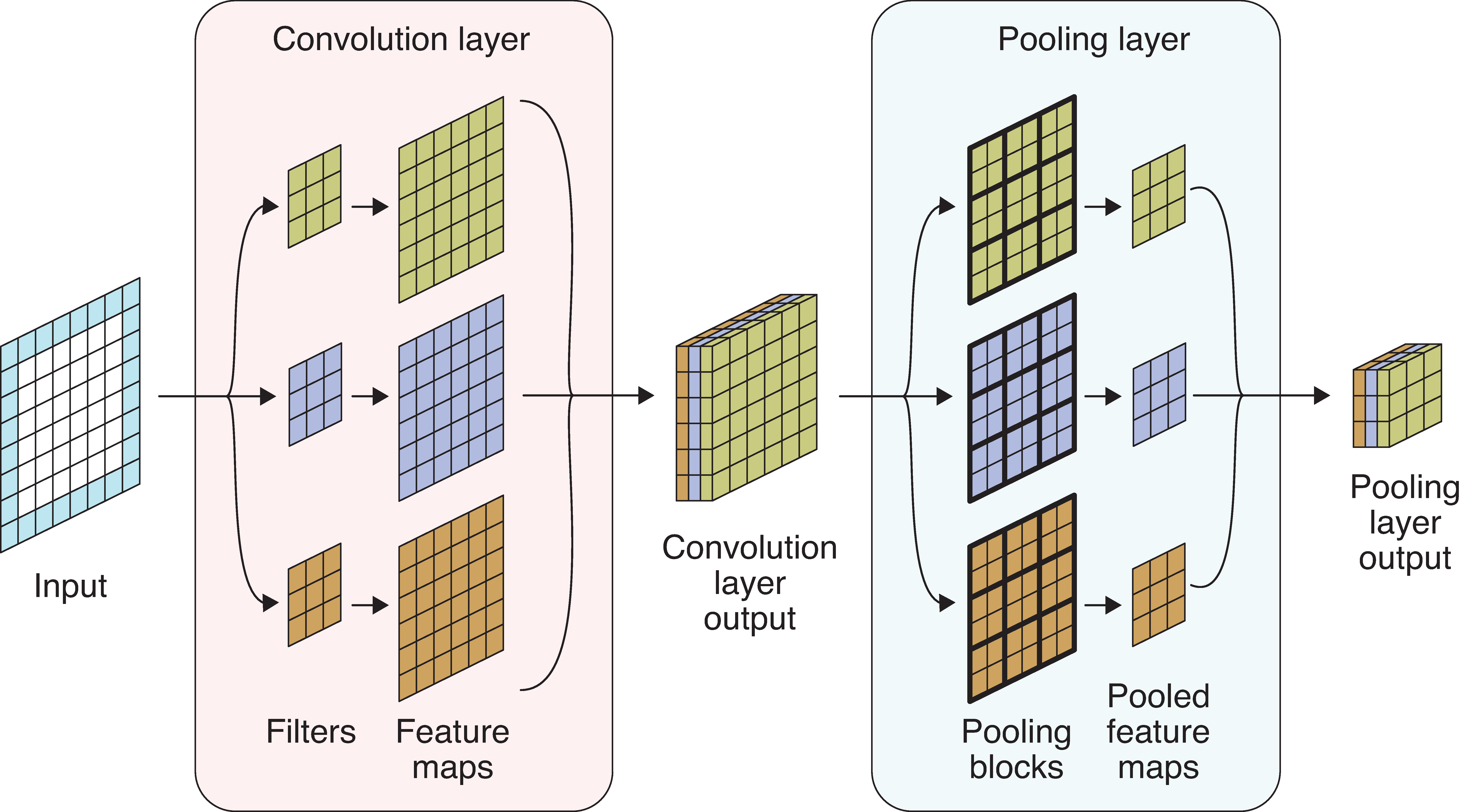

Pooling for multiple channels

Pooling a multichannel input.

- Input tensor: 6x6 with 1 channel, zero padding.

- Convolution layer: Three 3x3 filters.

- Convolution layer output: 6x6 with 3 channels.

- Pooling layer: apply max pooling to each channel.

- Pooling layer output: 3x3, 3 channels.

Source: Glassner (2021), Deep Learning: A Visual Approach, Chapter 16.
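The shapes in this example can be traced step by step. The sketch below uses random values for the input and filters (an assumption), omits bias and activation, and assumes 2x2 max pooling, which is consistent with the 6x6 to 3x3 reduction listed above.

```python
import numpy as np

rng = np.random.default_rng(3)
image = rng.random((6, 6))       # 6x6 input with 1 channel
filters = rng.random((3, 3, 3))  # three 3x3 filters

# Zero padding: one extra border element on each side.
padded = np.pad(image, 1)

# Convolution layer: each filter produces one 6x6 output channel.
conv = np.zeros((6, 6, 3))
for f in range(3):
    for i in range(6):
        for j in range(6):
            conv[i, j, f] = np.sum(padded[i:i + 3, j:j + 3] * filters[f])

# Pooling layer: 2x2 max pooling applied to each channel separately.
pooled = conv.reshape(3, 2, 3, 2, 3).max(axis=(1, 3))

print(padded.shape, conv.shape, pooled.shape)
```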

Why/why not use pooling?

Why? Pooling reduces the size of tensors, therefore reduces memory usage and execution time (recall that 1x1 convolution reduces the number of channels in a tensor).

Why not?

Geoffrey Hinton

Source: Hinton, Reddit AMA.

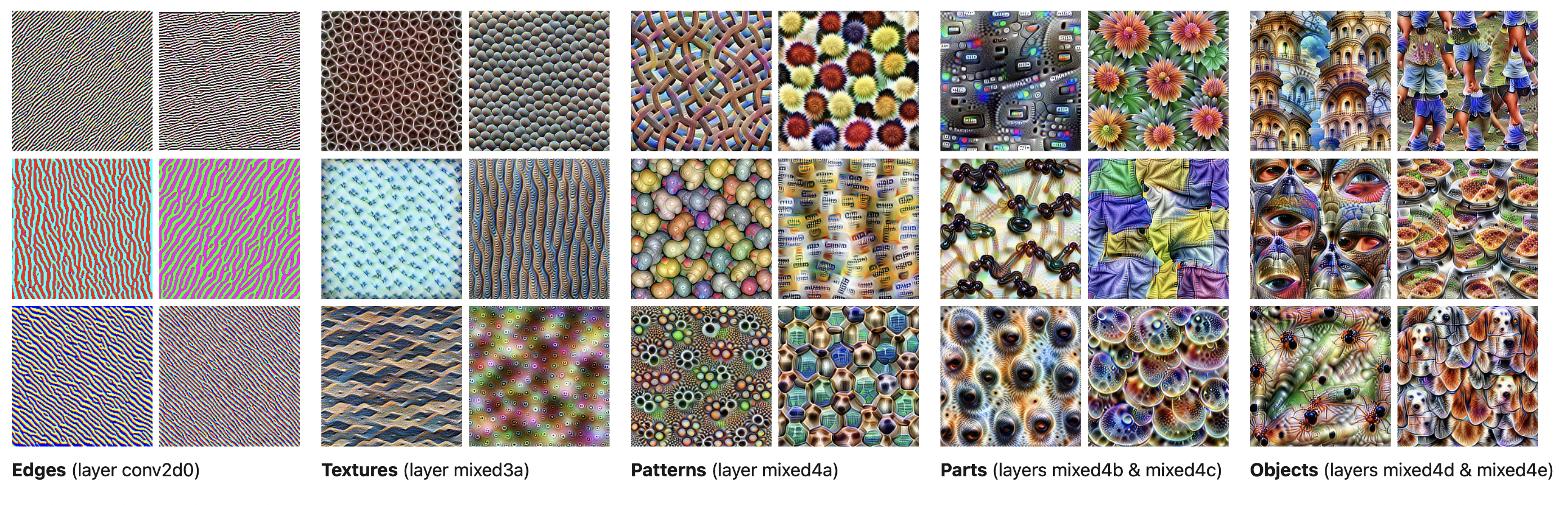

What do the CNN layers learn?

Source: Distill article, Feature Visualization.

Demo: Character Recognition

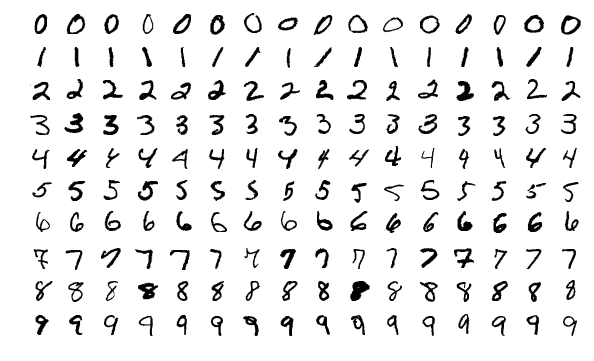

MNIST Dataset

The MNIST dataset.

Source: Wikipedia, MNIST database.

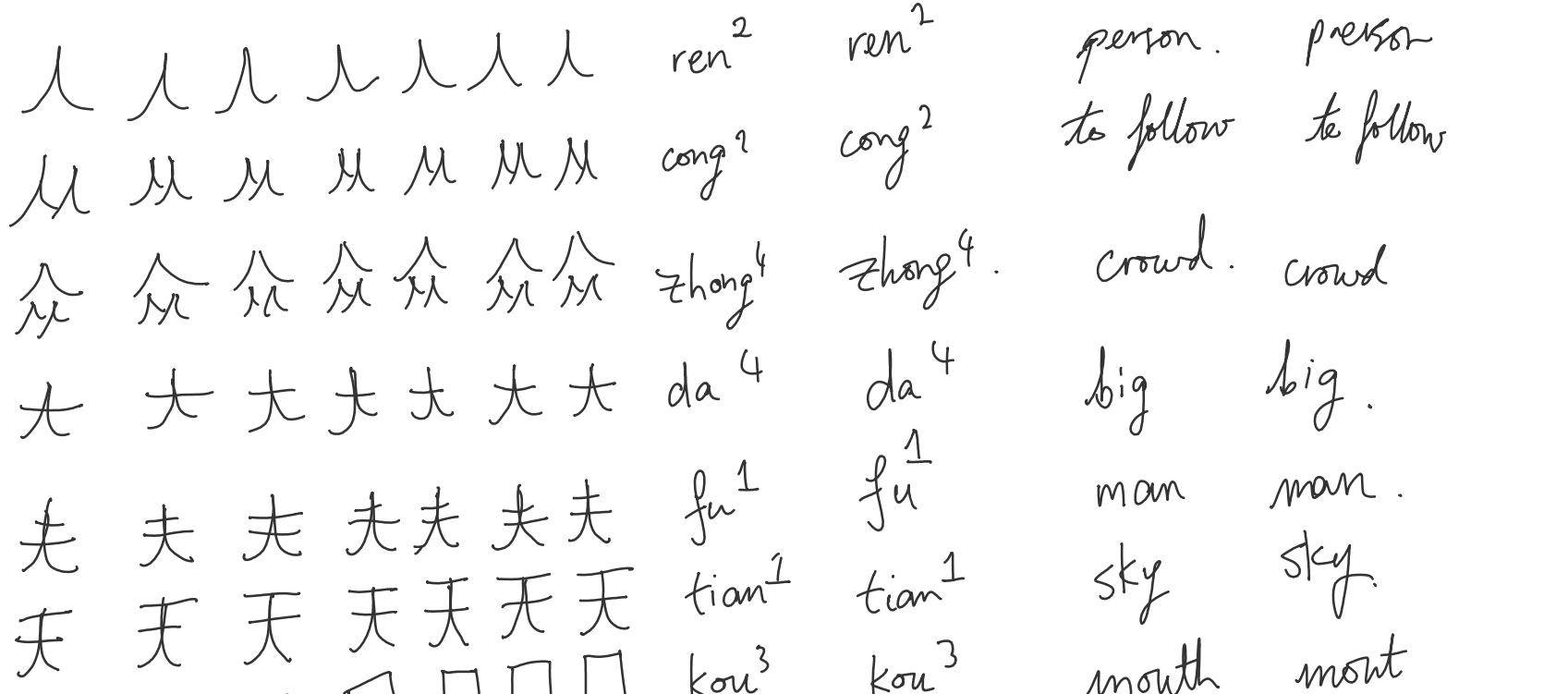

Mandarin Characters Dataset

57 poorly written Mandarin characters (57 \times 7 = 399).

Dataset of notes when learning/practising basic characters.

Downloading the dataset

The data is zipped (6.9 MB) and stored on my GitHub homepage.

    # Download the dataset if it hasn't already been downloaded.
    from pathlib import Path

    if not Path("mandarin").exists():
        print("Downloading dataset...")
        !wget https://laub.au/data/mandarin.zip
        !unzip mandarin.zip
    else:
        print("Already downloaded.")

Already downloaded.

Tip

Remember, the Jupyter notebook associated with your final report should either download your dataset when it is run, or you should supply the data separately.

Directory structure

Inspect directory structure

mandarin/

├── bai/

│ ├── bai-1.png

│ ├── bai-2.png

│ ├── bai-3.png

│ ├── bai-4.png

│ ├── bai-5.png

│ ├── bai-6.png

│ └── bai-7.png

├── ben/

│ ├── ben-1.png

│ ├── ben-2.png

│ ├── ben-3.png

│ ├── ben-4.png

│ ├── ben-5.png

│ ├── ben-6.png

│ └── ben-7.png

├── chong/

│ ├── chong-1.png

│ ├── chong-2.png

│ ├── chong-3.png

│ ├── chong-4.png

│ ├── chong-5.png

│ ├── chong-6.png

│ └── chong-7.png

├── chu/

│ ├── chu-1.png

│ ├── chu-2.png

│ ├── chu-3.png

│ ├── chu-4.png

│ ├── chu-5.png

│ ├── chu-6.png

│ └── chu-7.png

├── chuan/

│ ├── chuan-1.png

│ ├── chuan-2.png

│ ├── chuan-3.png

│ ├── chuan-4.png

│ ├── chuan-5.png

│ ├── chuan-6.png

│ └── chuan-7.png

├── cong/

│ ├── cong-1.png

│ ├── cong-2.png

│ ├── cong-3.png

│ ├── cong-4.png

│ ├── cong-5.png

│ ├── cong-6.png

│ └── cong-7.png

├── da/

│ ├── da-1.png

│ ├── da-2.png

│ ├── da-3.png

│ ├── da-4.png

│ ├── da-5.png

│ ├── da-6.png

│ └── da-7.png

├── dan/

│ ├── dan-1.png

│ ├── dan-2.png

│ ├── dan-3.png

│ ├── dan-4.png

│ ├── dan-5.png

│ ├── dan-6.png

│ └── dan-7.png

├── dong/

│ ├── dong-1.png

│ ├── dong-2.png

│ ├── dong-3.png

│ ├── dong-4.png

│ ├── dong-5.png

│ ├── dong-6.png

│ └── dong-7.png

├── fei/

│ ├── fei-1.png

│ ├── fei-2.png

│ ├── fei-3.png

│ ├── fei-4.png

│ ├── fei-5.png

│ ├── fei-6.png

│ └── fei-7.png

├── fu/

│ ├── fu-1.png

│ ├── fu-2.png

│ ├── fu-3.png

│ ├── fu-4.png

│ ├── fu-5.png

│ ├── fu-6.png

│ └── fu-7.png

├── fu2/

│ ├── fu2-1.png

│ ├── fu2-2.png

│ ├── fu2-3.png

│ ├── fu2-4.png

│ ├── fu2-5.png

│ ├── fu2-6.png

│ └── fu2-7.png

├── gao/

│ ├── gao-1.png

│ ├── gao-2.png

│ ├── gao-3.png

│ ├── gao-4.png

│ ├── gao-5.png

│ ├── gao-6.png

│ └── gao-7.png

├── gong/

│ ├── gong-1.png

│ ├── gong-2.png

│ ├── gong-3.png

│ ├── gong-4.png

│ ├── gong-5.png

│ ├── gong-6.png

│ └── gong-7.png

├── guo/

│ ├── guo-1.png

│ ├── guo-2.png

│ ├── guo-3.png

│ ├── guo-4.png

│ ├── guo-5.png

│ ├── guo-6.png

│ └── guo-7.png

├── hu/

│ ├── hu-1.png

│ ├── hu-2.png

│ ├── hu-3.png

│ ├── hu-4.png

│ ├── hu-5.png

│ ├── hu-6.png

│ └── hu-7.png

├── huo/

│ ├── huo-1.png

│ ├── huo-2.png

│ ├── huo-3.png

│ ├── huo-4.png

│ ├── huo-5.png

│ ├── huo-6.png

│ └── huo-7.png

├── kou/

│ ├── kou-1.png

│ ├── kou-2.png

│ ├── kou-3.png

│ ├── kou-4.png

│ ├── kou-5.png

│ ├── kou-6.png

│ └── kou-7.png

├── ku/

│ ├── ku-1.png

│ ├── ku-2.png

│ ├── ku-3.png

│ ├── ku-4.png

│ ├── ku-5.png

│ ├── ku-6.png

│ └── ku-7.png

├── lin/

│ ├── lin-1.png

│ ├── lin-2.png

│ ├── lin-3.png

│ ├── lin-4.png

│ ├── lin-5.png

│ ├── lin-6.png

│ └── lin-7.png

├── ma/

│ ├── ma-1.png

│ ├── ma-2.png

│ ├── ma-3.png

│ ├── ma-4.png

│ ├── ma-5.png

│ ├── ma-6.png

│ └── ma-7.png

├── ma2/

│ ├── ma2-1.png

│ ├── ma2-2.png

│ ├── ma2-3.png

│ ├── ma2-4.png

│ ├── ma2-5.png

│ ├── ma2-6.png

│ └── ma2-7.png

├── ma3/

│ ├── ma3-1.png

│ ├── ma3-2.png

│ ├── ma3-3.png

│ ├── ma3-4.png

│ ├── ma3-5.png

│ ├── ma3-6.png

│ └── ma3-7.png

├── mei/

│ ├── mei-1.png

│ ├── mei-2.png

│ ├── mei-3.png

│ ├── mei-4.png

│ ├── mei-5.png

│ ├── mei-6.png

│ └── mei-7.png

├── men/

│ ├── men-1.png

│ ├── men-2.png

│ ├── men-3.png

│ ├── men-4.png

│ ├── men-5.png

│ ├── men-6.png

│ └── men-7.png

├── ming/

│ ├── ming-1.png

│ ├── ming-2.png

│ ├── ming-3.png

│ ├── ming-4.png

│ ├── ming-5.png

│ ├── ming-6.png

│ └── ming-7.png

├── mu/

│ ├── mu-1.png

│ ├── mu-2.png

│ ├── mu-3.png

│ ├── mu-4.png

│ ├── mu-5.png

│ ├── mu-6.png

│ └── mu-7.png

├── nan/

│ ├── nan-1.png

│ ├── nan-2.png

│ ├── nan-3.png

│ ├── nan-4.png

│ ├── nan-5.png

│ ├── nan-6.png

│ └── nan-7.png

├── niao/

│ ├── niao-1.png

│ ├── niao-2.png

│ ├── niao-3.png

│ ├── niao-4.png

│ ├── niao-5.png

│ ├── niao-6.png

│ └── niao-7.png

├── niu/

│ ├── niu-1.png

│ ├── niu-2.png

│ ├── niu-3.png

│ ├── niu-4.png

│ ├── niu-5.png

│ ├── niu-6.png

│ └── niu-7.png

├── nu/

│ ├── nu-1.png

│ ├── nu-2.png

│ ├── nu-3.png

│ ├── nu-4.png

│ ├── nu-5.png

│ ├── nu-6.png

│ └── nu-7.png

├── nuan/

│ ├── nuan-1.png

│ ├── nuan-2.png

│ ├── nuan-3.png

│ ├── nuan-4.png

│ ├── nuan-5.png

│ ├── nuan-6.png

│ └── nuan-7.png

├── peng/

│ ├── peng-1.png

│ ├── peng-2.png

│ ├── peng-3.png

│ ├── peng-4.png

│ ├── peng-5.png

│ ├── peng-6.png

│ └── peng-7.png

├── quan/

│ ├── quan-1.png

│ ├── quan-2.png

│ ├── quan-3.png

│ ├── quan-4.png

│ ├── quan-5.png

│ ├── quan-6.png

│ └── quan-7.png

├── ren/

│ ├── ren-1.png

│ ├── ren-2.png

│ ├── ren-3.png

│ ├── ren-4.png

│ ├── ren-5.png

│ ├── ren-6.png

│ └── ren-7.png

├── ri/

│ ├── ri-1.png

│ ├── ri-2.png

│ ├── ri-3.png

│ ├── ri-4.png

│ ├── ri-5.png

│ ├── ri-6.png

│ └── ri-7.png

├── rou/

│ ├── rou-1.png

│ ├── rou-2.png

│ ├── rou-3.png

│ ├── rou-4.png

│ ├── rou-5.png

│ ├── rou-6.png

│ └── rou-7.png

├── sen/

│ ├── sen-1.png

│ ├── sen-2.png

│ ├── sen-3.png

│ ├── sen-4.png

│ ├── sen-5.png

│ ├── sen-6.png

│ └── sen-7.png

├── shan/

│ ├── shan-1.png

│ ├── shan-2.png

│ ├── shan-3.png

│ ├── shan-4.png

│ ├── shan-5.png

│ ├── shan-6.png

│ └── shan-7.png

├── shan2/

│ ├── shan2-1.png

│ ├── shan2-2.png

│ ├── shan2-3.png

│ ├── shan2-4.png

│ ├── shan2-5.png

│ ├── shan2-6.png

│ └── shan2-7.png

├── shui/

│ ├── shui-1.png

│ ├── shui-2.png

│ ├── shui-3.png

│ ├── shui-4.png

│ ├── shui-5.png

│ ├── shui-6.png

│ └── shui-7.png

├── tai/

│ ├── tai-1.png

│ ├── tai-2.png

│ ├── tai-3.png

│ ├── tai-4.png

│ ├── tai-5.png

│ ├── tai-6.png

│ └── tai-7.png

├── tian/

│ ├── tian-1.png

│ ├── tian-2.png

│ ├── tian-3.png

│ ├── tian-4.png

│ ├── tian-5.png

│ ├── tian-6.png

│ └── tian-7.png

├── wang/

│ ├── wang-1.png

│ ├── wang-2.png

│ ├── wang-3.png

│ ├── wang-4.png

│ ├── wang-5.png

│ ├── wang-6.png

│ └── wang-7.png

├── wen/

│ ├── wen-1.png

│ ├── wen-2.png

│ ├── wen-3.png

│ ├── wen-4.png

│ ├── wen-5.png

│ ├── wen-6.png

│ └── wen-7.png

├── xian/

│ ├── xian-1.png

│ ├── xian-2.png

│ ├── xian-3.png

│ ├── xian-4.png

│ ├── xian-5.png

│ ├── xian-6.png

│ └── xian-7.png

├── xuan/

│ ├── xuan-1.png

│ ├── xuan-2.png

│ ├── xuan-3.png

│ ├── xuan-4.png

│ ├── xuan-5.png

│ ├── xuan-6.png

│ └── xuan-7.png

├── yan/

│ ├── yan-1.png

│ ├── yan-2.png

│ ├── yan-3.png

│ ├── yan-4.png

│ ├── yan-5.png

│ ├── yan-6.png

│ └── yan-7.png

├── yang/

│ ├── yang-1.png

│ ├── yang-2.png

│ ├── yang-3.png

│ ├── yang-4.png

│ ├── yang-5.png

│ ├── yang-6.png

│ └── yang-7.png

├── yin/

│ ├── yin-1.png

│ ├── yin-2.png

│ ├── yin-3.png

│ ├── yin-4.png

│ ├── yin-5.png

│ ├── yin-6.png

│ └── yin-7.png

├── yu/

│ ├── yu-1.png

│ ├── yu-2.png

│ ├── yu-3.png

│ ├── yu-4.png

│ ├── yu-5.png

│ ├── yu-6.png

│ └── yu-7.png

├── yu2/

│ ├── yu2-1.png

│ ├── yu2-2.png

│ ├── yu2-3.png

│ ├── yu2-4.png

│ ├── yu2-5.png

│ ├── yu2-6.png

│ └── yu2-7.png

├── yue/

│ ├── yue-1.png

│ ├── yue-2.png

│ ├── yue-3.png

│ ├── yue-4.png

│ ├── yue-5.png

│ ├── yue-6.png

│ └── yue-7.png

├── zhong/

│ ├── zhong-1.png

│ ├── zhong-2.png

│ ├── zhong-3.png

│ ├── zhong-4.png

│ ├── zhong-5.png

│ ├── zhong-6.png

│ └── zhong-7.png

├── zhu/

│ ├── zhu-1.png

│ ├── zhu-2.png

│ ├── zhu-3.png

│ ├── zhu-4.png

│ ├── zhu-5.png

│ ├── zhu-6.png

│ └── zhu-7.png

├── zhu2/

│ ├── zhu2-1.png

│ ├── zhu2-2.png

│ ├── zhu2-3.png

│ ├── zhu2-4.png

│ ├── zhu2-5.png

│ ├── zhu2-6.png

│ └── zhu2-7.png

└── zhuo/

    ├── zhuo-1.png

    ├── zhuo-2.png

    ├── zhuo-3.png

    ├── zhuo-4.png

    ├── zhuo-5.png

    ├── zhuo-6.png

    └── zhuo-7.png

tree = display_tree("mandarin", string_rep=True).split("\n")

print("\n".join(tree[:12]))

print("...")

print("\n".join(tree[-4:]))

mandarin/

├── bai/

│ ├── bai-1.png

│ ├── bai-2.png

│ ├── bai-3.png

│ ├── bai-4.png

│ ├── bai-5.png

│ ├── bai-6.png

│ └── bai-7.png

├── ben/

│ ├── ben-1.png

│ ├── ben-2.png

...

    ├── zhuo-5.png

    ├── zhuo-6.png

    └── zhuo-7.png

Splitting into train/val/test sets
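The code for this step is not reproduced here. Judging by the directory trees that follow, image 5 of each character ends up in the test set, image 7 in the validation set, and the rest in the training set. A minimal sketch of such a split using only the standard library is given below; the function name `split_dataset` and the fixed-index rule are assumptions made for illustration, and the lecture's own code may well have used a random split instead.

```python
import shutil
import tempfile
from pathlib import Path


def split_dataset(src, dst, test_index=5, val_index=7):
    """Copy src/<char>/<char>-<k>.png into dst/{train,val,test}/<char>/."""
    src, dst = Path(src), Path(dst)
    for char_dir in sorted(p for p in src.iterdir() if p.is_dir()):
        for image in sorted(char_dir.glob("*.png")):
            # File names look like "bai-5.png"; the number after the
            # last "-" decides which split the file belongs to.
            index = int(image.stem.rsplit("-", 1)[1])
            if index == test_index:
                split = "test"
            elif index == val_index:
                split = "val"
            else:
                split = "train"
            target = dst / split / char_dir.name
            target.mkdir(parents=True, exist_ok=True)
            shutil.copy(image, target / image.name)


# Demonstrate on a throwaway directory filled with empty placeholder files.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "mandarin"
    for char in ["bai", "ben"]:
        (src / char).mkdir(parents=True)
        for k in range(1, 8):
            (src / char / f"{char}-{k}.png").touch()

    dst = Path(tmp) / "mandarin-split"
    split_dataset(src, dst)
    train_files = sorted(p.name for p in (dst / "train" / "bai").iterdir())
    val_files = sorted(p.name for p in (dst / "val" / "bai").iterdir())
    test_files = sorted(p.name for p in (dst / "test" / "bai").iterdir())
    print(train_files, val_files, test_files)
```

Keeping one sub-directory per class inside each split means the folder names can double as class labels when the images are loaded.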

Directory structure II

mandarin-split/

├── test/

│ ├── bai/

│ │ └── bai-5.png

│ ├── ben/

│ │ └── ben-5.png

│ ├── chong/

│ │ └── chong-5.png

│ ├── chu/

│ │ └── chu-5.png

│ ├── chuan/

│ │ └── chuan-5.png

│ ├── cong/

│ │ └── cong-5.png

│ ├── da/

│ │ └── da-5.png

│ ├── dan/

│ │ └── dan-5.png

│ ├── dong/

│ │ └── dong-5.png

│ ├── fei/

│ │ └── fei-5.png

│ ├── fu/

│ │ └── fu-5.png

│ ├── fu2/

│ │ └── fu2-5.png

│ ├── gao/

│ │ └── gao-5.png

│ ├── gong/

│ │ └── gong-5.png

│ ├── guo/

│ │ └── guo-5.png

│ ├── hu/

│ │ └── hu-5.png

│ ├── huo/

│ │ └── huo-5.png

│ ├── kou/

│ │ └── kou-5.png

│ ├── ku/

│ │ └── ku-5.png

│ ├── lin/

│ │ └── lin-5.png

│ ├── ma/

│ │ └── ma-5.png

│ ├── ma2/

│ │ └── ma2-5.png

│ ├── ma3/

│ │ └── ma3-5.png

│ ├── mei/

│ │ └── mei-5.png

│ ├── men/

│ │ └── men-5.png

│ ├── ming/

│ │ └── ming-5.png

│ ├── mu/

│ │ └── mu-5.png

│ ├── nan/

│ │ └── nan-5.png

│ ├── niao/

│ │ └── niao-5.png

│ ├── niu/

│ │ └── niu-5.png

│ ├── nu/

│ │ └── nu-5.png

│ ├── nuan/

│ │ └── nuan-5.png

│ ├── peng/

│ │ └── peng-5.png

│ ├── quan/

│ │ └── quan-5.png

│ ├── ren/

│ │ └── ren-5.png

│ ├── ri/

│ │ └── ri-5.png

│ ├── rou/

│ │ └── rou-5.png

│ ├── sen/

│ │ └── sen-5.png

│ ├── shan/

│ │ └── shan-5.png

│ ├── shan2/

│ │ └── shan2-5.png

│ ├── shui/

│ │ └── shui-5.png

│ ├── tai/

│ │ └── tai-5.png

│ ├── tian/

│ │ └── tian-5.png

│ ├── wang/

│ │ └── wang-5.png

│ ├── wen/

│ │ └── wen-5.png

│ ├── xian/

│ │ └── xian-5.png

│ ├── xuan/

│ │ └── xuan-5.png

│ ├── yan/

│ │ └── yan-5.png

│ ├── yang/

│ │ └── yang-5.png

│ ├── yin/

│ │ └── yin-5.png

│ ├── yu/

│ │ └── yu-5.png

│ ├── yu2/

│ │ └── yu2-5.png

│ ├── yue/

│ │ └── yue-5.png

│ ├── zhong/

│ │ └── zhong-5.png

│ ├── zhu/

│ │ └── zhu-5.png

│ ├── zhu2/

│ │ └── zhu2-5.png

│ └── zhuo/

│ └── zhuo-5.png

├── train/

│ ├── bai/

│ │ ├── bai-1.png

│ │ ├── bai-2.png

│ │ ├── bai-3.png

│ │ ├── bai-4.png

│ │ └── bai-6.png

│ ├── ben/

│ │ ├── ben-1.png

│ │ ├── ben-2.png

│ │ ├── ben-3.png

│ │ ├── ben-4.png

│ │ └── ben-6.png

│ ├── chong/

│ │ ├── chong-1.png

│ │ ├── chong-2.png

│ │ ├── chong-3.png

│ │ ├── chong-4.png

│ │ └── chong-6.png

│ ├── chu/

│ │ ├── chu-1.png

│ │ ├── chu-2.png

│ │ ├── chu-3.png

│ │ ├── chu-4.png

│ │ └── chu-6.png

│ ├── chuan/

│ │ ├── chuan-1.png

│ │ ├── chuan-2.png

│ │ ├── chuan-3.png

│ │ ├── chuan-4.png

│ │ └── chuan-6.png

│ ├── cong/

│ │ ├── cong-1.png

│ │ ├── cong-2.png

│ │ ├── cong-3.png

│ │ ├── cong-4.png

│ │ └── cong-6.png

│ ├── da/

│ │ ├── da-1.png

│ │ ├── da-2.png

│ │ ├── da-3.png

│ │ ├── da-4.png

│ │ └── da-6.png

│ ├── dan/

│ │ ├── dan-1.png

│ │ ├── dan-2.png

│ │ ├── dan-3.png

│ │ ├── dan-4.png

│ │ └── dan-6.png

│ ├── dong/

│ │ ├── dong-1.png

│ │ ├── dong-2.png

│ │ ├── dong-3.png

│ │ ├── dong-4.png

│ │ └── dong-6.png

│ ├── fei/

│ │ ├── fei-1.png

│ │ ├── fei-2.png

│ │ ├── fei-3.png

│ │ ├── fei-4.png

│ │ └── fei-6.png

│ ├── fu/

│ │ ├── fu-1.png

│ │ ├── fu-2.png

│ │ ├── fu-3.png

│ │ ├── fu-4.png

│ │ └── fu-6.png

│ ├── fu2/

│ │ ├── fu2-1.png

│ │ ├── fu2-2.png

│ │ ├── fu2-3.png

│ │ ├── fu2-4.png

│ │ └── fu2-6.png

│ ├── gao/

│ │ ├── gao-1.png

│ │ ├── gao-2.png

│ │ ├── gao-3.png

│ │ ├── gao-4.png

│ │ └── gao-6.png

│ ├── gong/

│ │ ├── gong-1.png

│ │ ├── gong-2.png

│ │ ├── gong-3.png

│ │ ├── gong-4.png

│ │ └── gong-6.png

│ ├── guo/

│ │ ├── guo-1.png

│ │ ├── guo-2.png

│ │ ├── guo-3.png

│ │ ├── guo-4.png

│ │ └── guo-6.png

│ ├── hu/

│ │ ├── hu-1.png

│ │ ├── hu-2.png

│ │ ├── hu-3.png

│ │ ├── hu-4.png

│ │ └── hu-6.png

│ ├── huo/

│ │ ├── huo-1.png

│ │ ├── huo-2.png

│ │ ├── huo-3.png

│ │ ├── huo-4.png

│ │ └── huo-6.png

│ ├── kou/

│ │ ├── kou-1.png

│ │ ├── kou-2.png

│ │ ├── kou-3.png

│ │ ├── kou-4.png

│ │ └── kou-6.png

│ ├── ku/

│ │ ├── ku-1.png

│ │ ├── ku-2.png

│ │ ├── ku-3.png

│ │ ├── ku-4.png

│ │ └── ku-6.png

│ ├── lin/

│ │ ├── lin-1.png

│ │ ├── lin-2.png

│ │ ├── lin-3.png

│ │ ├── lin-4.png

│ │ └── lin-6.png

│ ├── ma/

│ │ ├── ma-1.png

│ │ ├── ma-2.png

│ │ ├── ma-3.png

│ │ ├── ma-4.png

│ │ └── ma-6.png

│ ├── ma2/

│ │ ├── ma2-1.png

│ │ ├── ma2-2.png

│ │ ├── ma2-3.png

│ │ ├── ma2-4.png

│ │ └── ma2-6.png

│ ├── ma3/

│ │ ├── ma3-1.png

│ │ ├── ma3-2.png

│ │ ├── ma3-3.png

│ │ ├── ma3-4.png

│ │ └── ma3-6.png

│ ├── mei/

│ │ ├── mei-1.png

│ │ ├── mei-2.png

│ │ ├── mei-3.png

│ │ ├── mei-4.png

│ │ └── mei-6.png

│ ├── men/

│ │ ├── men-1.png

│ │ ├── men-2.png

│ │ ├── men-3.png

│ │ ├── men-4.png

│ │ └── men-6.png

│ ├── ming/

│ │ ├── ming-1.png

│ │ ├── ming-2.png

│ │ ├── ming-3.png

│ │ ├── ming-4.png

│ │ └── ming-6.png

│ ├── mu/

│ │ ├── mu-1.png

│ │ ├── mu-2.png

│ │ ├── mu-3.png

│ │ ├── mu-4.png

│ │ └── mu-6.png

│ ├── nan/

│ │ ├── nan-1.png

│ │ ├── nan-2.png

│ │ ├── nan-3.png

│ │ ├── nan-4.png

│ │ └── nan-6.png

│ ├── niao/

│ │ ├── niao-1.png

│ │ ├── niao-2.png

│ │ ├── niao-3.png

│ │ ├── niao-4.png

│ │ └── niao-6.png

│ ├── niu/

│ │ ├── niu-1.png

│ │ ├── niu-2.png

│ │ ├── niu-3.png

│ │ ├── niu-4.png

│ │ └── niu-6.png

│ ├── nu/

│ │ ├── nu-1.png

│ │ ├── nu-2.png

│ │ ├── nu-3.png

│ │ ├── nu-4.png

│ │ └── nu-6.png

│ ├── nuan/

│ │ ├── nuan-1.png

│ │ ├── nuan-2.png

│ │ ├── nuan-3.png

│ │ ├── nuan-4.png

│ │ └── nuan-6.png

│ ├── peng/

│ │ ├── peng-1.png

│ │ ├── peng-2.png

│ │ ├── peng-3.png

│ │ ├── peng-4.png

│ │ └── peng-6.png

│ ├── quan/

│ │ ├── quan-1.png

│ │ ├── quan-2.png

│ │ ├── quan-3.png

│ │ ├── quan-4.png

│ │ └── quan-6.png

│ ├── ren/

│ │ ├── ren-1.png

│ │ ├── ren-2.png

│ │ ├── ren-3.png

│ │ ├── ren-4.png

│ │ └── ren-6.png

│ ├── ri/

│ │ ├── ri-1.png

│ │ ├── ri-2.png

│ │ ├── ri-3.png

│ │ ├── ri-4.png

│ │ └── ri-6.png

│ ├── rou/

│ │ ├── rou-1.png

│ │ ├── rou-2.png

│ │ ├── rou-3.png

│ │ ├── rou-4.png

│ │ └── rou-6.png

│ ├── sen/

│ │ ├── sen-1.png

│ │ ├── sen-2.png

│ │ ├── sen-3.png

│ │ ├── sen-4.png

│ │ └── sen-6.png

│ ├── shan/

│ │ ├── shan-1.png

│ │ ├── shan-2.png

│ │ ├── shan-3.png

│ │ ├── shan-4.png

│ │ └── shan-6.png

│ ├── shan2/

│ │ ├── shan2-1.png

│ │ ├── shan2-2.png

│ │ ├── shan2-3.png

│ │ ├── shan2-4.png

│ │ └── shan2-6.png

│ ├── shui/

│ │ ├── shui-1.png

│ │ ├── shui-2.png

│ │ ├── shui-3.png

│ │ ├── shui-4.png

│ │ └── shui-6.png

│ ├── tai/

│ │ ├── tai-1.png

│ │ ├── tai-2.png

│ │ ├── tai-3.png

│ │ ├── tai-4.png

│ │ └── tai-6.png

│ ├── tian/

│ │ ├── tian-1.png

│ │ ├── tian-2.png

│ │ ├── tian-3.png

│ │ ├── tian-4.png

│ │ └── tian-6.png

│ ├── wang/

│ │ ├── wang-1.png

│ │ ├── wang-2.png

│ │ ├── wang-3.png

│ │ ├── wang-4.png

│ │ └── wang-6.png

│ ├── wen/

│ │ ├── wen-1.png

│ │ ├── wen-2.png

│ │ ├── wen-3.png

│ │ ├── wen-4.png

│ │ └── wen-6.png

│ ├── xian/

│ │ ├── xian-1.png

│ │ ├── xian-2.png

│ │ ├── xian-3.png

│ │ ├── xian-4.png

│ │ └── xian-6.png

│ ├── xuan/

│ │ ├── xuan-1.png

│ │ ├── xuan-2.png

│ │ ├── xuan-3.png

│ │ ├── xuan-4.png

│ │ └── xuan-6.png

│ ├── yan/

│ │ ├── yan-1.png

│ │ ├── yan-2.png

│ │ ├── yan-3.png

│ │ ├── yan-4.png

│ │ └── yan-6.png

│ ├── yang/

│ │ ├── yang-1.png

│ │ ├── yang-2.png

│ │ ├── yang-3.png

│ │ ├── yang-4.png

│ │ └── yang-6.png

│ ├── yin/

│ │ ├── yin-1.png

│ │ ├── yin-2.png

│ │ ├── yin-3.png

│ │ ├── yin-4.png

│ │ └── yin-6.png

│ ├── yu/

│ │ ├── yu-1.png

│ │ ├── yu-2.png

│ │ ├── yu-3.png

│ │ ├── yu-4.png

│ │ └── yu-6.png

│ ├── yu2/

│ │ ├── yu2-1.png

│ │ ├── yu2-2.png

│ │ ├── yu2-3.png

│ │ ├── yu2-4.png

│ │ └── yu2-6.png

│ ├── yue/

│ │ ├── yue-1.png

│ │ ├── yue-2.png

│ │ ├── yue-3.png

│ │ ├── yue-4.png

│ │ └── yue-6.png

│ ├── zhong/

│ │ ├── zhong-1.png

│ │ ├── zhong-2.png

│ │ ├── zhong-3.png

│ │ ├── zhong-4.png

│ │ └── zhong-6.png

│ ├── zhu/

│ │ ├── zhu-1.png

│ │ ├── zhu-2.png

│ │ ├── zhu-3.png

│ │ ├── zhu-4.png

│ │ └── zhu-6.png

│ ├── zhu2/

│ │ ├── zhu2-1.png

│ │ ├── zhu2-2.png

│ │ ├── zhu2-3.png

│ │ ├── zhu2-4.png

│ │ └── zhu2-6.png

│ └── zhuo/

│ ├── zhuo-1.png

│ ├── zhuo-2.png

│ ├── zhuo-3.png

│ ├── zhuo-4.png

│ └── zhuo-6.png

└── val/

├── bai/

│ └── bai-7.png

├── ben/

│ └── ben-7.png

├── chong/

│ └── chong-7.png

├── chu/

│ └── chu-7.png

├── chuan/

│ └── chuan-7.png

├── cong/

│ └── cong-7.png

├── da/

│ └── da-7.png

├── dan/

│ └── dan-7.png

├── dong/

│ └── dong-7.png

├── fei/

│ └── fei-7.png

├── fu/

│ └── fu-7.png

├── fu2/

│ └── fu2-7.png

├── gao/

│ └── gao-7.png

├── gong/

│ └── gong-7.png

├── guo/

│ └── guo-7.png

├── hu/

│ └── hu-7.png

├── huo/

│ └── huo-7.png

├── kou/

│ └── kou-7.png

├── ku/

│ └── ku-7.png

├── lin/

│ └── lin-7.png

├── ma/

│ └── ma-7.png

├── ma2/

│ └── ma2-7.png

├── ma3/

│ └── ma3-7.png

├── mei/

│ └── mei-7.png

├── men/

│ └── men-7.png

├── ming/

│ └── ming-7.png

├── mu/

│ └── mu-7.png

├── nan/

│ └── nan-7.png

├── niao/

│ └── niao-7.png

├── niu/

│ └── niu-7.png

├── nu/

│ └── nu-7.png

├── nuan/

│ └── nuan-7.png

├── peng/

│ └── peng-7.png

├── quan/

│ └── quan-7.png

├── ren/

│ └── ren-7.png

├── ri/

│ └── ri-7.png

├── rou/

│ └── rou-7.png

├── sen/

│ └── sen-7.png

├── shan/

│ └── shan-7.png

├── shan2/

│ └── shan2-7.png

├── shui/

│ └── shui-7.png

├── tai/

│ └── tai-7.png

├── tian/

│ └── tian-7.png

├── wang/

│ └── wang-7.png

├── wen/

│ └── wen-7.png

├── xian/

│ └── xian-7.png

├── xuan/

│ └── xuan-7.png

├── yan/

│ └── yan-7.png

├── yang/

│ └── yang-7.png

├── yin/

│ └── yin-7.png

├── yu/

│ └── yu-7.png

├── yu2/

│ └── yu2-7.png

├── yue/

│ └── yue-7.png

├── zhong/

│ └── zhong-7.png

├── zhu/

│ └── zhu-7.png

├── zhu2/

│ └── zhu2-7.png

└── zhuo/

└── zhuo-7.png

train/

├── bai/

│ ├── bai-1.png

│ ├── bai-2.png

│ ├── bai-3.png

│ ├── bai-4.png

│ └── bai-6.png

...

val/

├── bai/

│ └── bai-7.png

├── ben/

│ └── ben-7.png

...

test/

├── bai/

│ └── bai-5.png

├── ben/

│ └── ben-5.png

...

Keras image dataset loading

Inspecting the datasets

['bai', 'ben', 'chong', 'chu', 'chuan', 'cong', 'da', 'dan', 'dong', 'fei', 'fu', 'fu2', 'gao', 'gong', 'guo', 'hu', 'huo', 'kou', 'ku', 'lin', 'ma', 'ma2', 'ma3', 'mei', 'men', 'ming', 'mu', 'nan', 'niao', 'niu', 'nu', 'nuan', 'peng', 'quan', 'ren', 'ri', 'rou', 'sen', 'shan', 'shan2', 'shui', 'tai', 'tian', 'wang', 'wen', 'xian', 'xuan', 'yan', 'yang', 'yin', 'yu', 'yu2', 'yue', 'zhong', 'zhu', 'zhu2', 'zhuo']

# NB: Need shuffle=False earlier for these X & y to line up.
X_train = np.concatenate(list(train_ds.map(lambda x, y: x)))
y_train = np.concatenate(list(train_ds.map(lambda x, y: y)))

X_val = np.concatenate(list(val_ds.map(lambda x, y: x)))
y_val = np.concatenate(list(val_ds.map(lambda x, y: y)))

X_test = np.concatenate(list(test_ds.map(lambda x, y: x)))
y_test = np.concatenate(list(test_ds.map(lambda x, y: y)))

X_train.shape, y_train.shape, X_val.shape, y_val.shape, X_test.shape, y_test.shape

((285, 80, 80, 1), (285,), (57, 80, 80, 1), (57,), (57, 80, 80, 1), (57,))

Plotting some characters (setup)

def plot_mandarin_characters(ds, plot_char_label=0):
    # Plot the images in `ds` whose label equals `plot_char_label`
    # (the 1x5 grid has room for at most five of them).
    num_plotted = 0
    for images, labels in ds:
        for i in range(images.shape[0]):
            label = labels[i]
            if label == plot_char_label:
                plt.subplot(1, 5, num_plotted + 1)
                plt.imshow(images[i].numpy().astype("uint8"), cmap="gray")
                plt.title(ds.class_names[label])
                plt.axis("off")
                num_plotted += 1
    plt.show()

Plotting some training characters

Plotting some val/test characters

Demo: Character Recognition II

Make the CNN

from keras.layers import Rescaling, Conv2D, MaxPooling2D, Flatten

num_classes = np.unique(y_train).shape[0]
random.seed(123)

model = Sequential([
    Input((img_height, img_width, 1)),
    Rescaling(1./255),
    Conv2D(16, 3, padding="same", activation="relu", name="conv1"),
    MaxPooling2D(name="pool1"),
    Conv2D(32, 3, padding="same", activation="relu", name="conv2"),
    MaxPooling2D(name="pool2"),
    Conv2D(64, 3, padding="same", activation="relu", name="conv3"),
    MaxPooling2D(name="pool3"),
    # The final layer outputs raw logits (no softmax activation).
    Flatten(), Dense(128, activation="relu"), Dense(num_classes)
])

Tip

The Rescaling layer will rescale the intensities to [0, 1].

Architecture inspired by https://www.tensorflow.org/tutorials/images/classification.

Inspect the model

Model: "sequential"

| Layer (type) | Output Shape | Param # |
|---|---|---|
| rescaling (Rescaling) | (None, 80, 80, 1) | 0 |
| conv1 (Conv2D) | (None, 80, 80, 16) | 160 |
| pool1 (MaxPooling2D) | (None, 40, 40, 16) | 0 |
| conv2 (Conv2D) | (None, 40, 40, 32) | 4,640 |
| pool2 (MaxPooling2D) | (None, 20, 20, 32) | 0 |
| conv3 (Conv2D) | (None, 20, 20, 64) | 18,496 |
| pool3 (MaxPooling2D) | (None, 10, 10, 64) | 0 |
| flatten (Flatten) | (None, 6400) | 0 |
| dense (Dense) | (None, 128) | 819,328 |
| dense_1 (Dense) | (None, 57) | 7,353 |

Total params: 849,977 (3.24 MB)

Trainable params: 849,977 (3.24 MB)

Non-trainable params: 0 (0.00 B)
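The parameter counts in the summary can be reproduced by hand. The sketch below is plain Python rather than Keras; the helper names `conv2d_params` and `dense_params` are made up for illustration. It assumes, as in the model definition, 3×3 kernels with "same" padding, default 2×2 max pooling, and bias terms in every layer.

```python
def conv2d_params(kernel_size, in_channels, filters):
    """Weights for each filter plus one bias per filter."""
    return kernel_size * kernel_size * in_channels * filters + filters


def dense_params(inputs, units):
    """One weight per input-unit pair plus one bias per unit."""
    return inputs * units + units


height = width = 80          # input image size
num_classes = 57

counts = {}
channels = 1                 # greyscale input
for name, filters in [("conv1", 16), ("conv2", 32), ("conv3", 64)]:
    counts[name] = conv2d_params(3, channels, filters)
    channels = filters
    # padding="same" keeps the size; the 2x2 max pool then halves it.
    height, width = height // 2, width // 2

flattened = height * width * channels
counts["dense"] = dense_params(flattened, 128)
counts["dense_1"] = dense_params(128, num_classes)

total_params = sum(counts.values())
print(flattened, counts, total_params)
```

Almost all of the parameters sit in the first dense layer, which is fed the 6,400 flattened activations; the three convolutional layers together contribute fewer than 25,000.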

Plot the CNN

Fit the CNN

loss = keras.losses.SparseCategoricalCrossentropy(from_logits=True)
topk = keras.metrics.SparseTopKCategoricalAccuracy(k=5)
model.compile(optimizer='adam', loss=loss, metrics=['accuracy', topk])

epochs = 100
es = EarlyStopping(patience=15, restore_best_weights=True,
    monitor="val_accuracy", verbose=2)

hist = model.fit(train_ds.shuffle(1000), validation_data=val_ds,
    epochs=epochs, callbacks=[es], verbose=0)

Epoch 41: early stopping

Restoring model weights from the end of the best epoch: 26.

Tip

Instead of putting a softmax activation on the output layer, we pass from_logits=True to the loss function; this is more numerically stable.
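To see why working from logits is more stable, here is a small sketch in plain Python. It illustrates the general log-sum-exp idea and is not a copy of Keras's internal implementation; both function names are made up for this example.

```python
import math


def cross_entropy_from_logits(logits, label):
    """Sparse categorical cross-entropy computed directly from logits."""
    m = max(logits)
    # Subtracting the maximum first keeps every exponent at or below zero.
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def cross_entropy_from_probs(logits, label):
    """Naive version: apply softmax first, then take the log."""
    exps = [math.exp(z) for z in logits]   # can overflow for large logits
    return -math.log(exps[label] / sum(exps))


small = [2.0, 1.0, 0.1]
print(cross_entropy_from_logits(small, 0), cross_entropy_from_probs(small, 0))

large = [1000.0, 0.0, -1000.0]
print(cross_entropy_from_logits(large, 0))
try:
    cross_entropy_from_probs(large, 0)
except OverflowError as err:
    print("naive version failed:", err)
```

For moderate logits the two versions agree; for extreme logits only the logit-based version returns a usable number.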

Plot the loss/accuracy curves (setup)

def plot_history(hist):
    epochs = range(len(hist.history["loss"]))

    # Left panel: training and validation accuracy per epoch.
    plt.subplot(1, 2, 1)
    plt.plot(epochs, hist.history["accuracy"], label="Train")
    plt.plot(epochs, hist.history["val_accuracy"], label="Val")
    plt.legend(loc="lower right")
    plt.title("Accuracy")

    # Right panel: training and validation loss per epoch.
    plt.subplot(1, 2, 2)
    plt.plot(epochs, hist.history["loss"], label="Train")
    plt.plot(epochs, hist.history["val_loss"], label="Val")
    plt.legend(loc="upper right")
    plt.title("Loss")
    plt.show()

Plot the loss/accuracy curves
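The early-stopping message in the training output (stopped at epoch 41, weights restored from epoch 26) is what patience=15 implies: training halts once 15 epochs pass without a new best validation accuracy. A simplified sketch of that rule follows. The validation accuracies are made up, the function name is hypothetical, and the real Keras callback has further options (such as min_delta) that are ignored here.

```python
def early_stopping(val_scores, patience):
    """Return (stopped_epoch, best_epoch), both 1-based, for a metric to maximise."""
    best_score = float("-inf")
    best_epoch = 0
    for epoch, score in enumerate(val_scores, start=1):
        if score > best_score:
            best_score, best_epoch = score, epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` epochs in a row: stop here.
            return epoch, best_epoch
    return len(val_scores), best_epoch


# Made-up validation accuracies: improve until epoch 26, then sit lower.
val_accuracy = [0.02 * e for e in range(1, 27)] + [0.45] * 74
stopped_epoch, best_epoch = early_stopping(val_accuracy, patience=15)
print(stopped_epoch, best_epoch)
```

With restore_best_weights=True the model kept after training is the one from `best_epoch`, not the one from the final epoch.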

Look at the metrics

Predict on the test set

Exception encountered when calling MaxPooling2D.call().

Negative dimension size caused by subtracting 2 from 1 for '{{node sequential_1/pool1_1/MaxPool2d}} = MaxPool[T=DT_FLOAT, data_format="NHWC", explicit_paddings=[], ksize=[1, 2, 2, 1], padding="VALID", strides=[1, 2, 2, 1]](sequential_1/conv1_1/Relu)' with input shapes: [32,80,1,16].

Arguments received by MaxPooling2D.call():

• inputs=tf.Tensor(shape=(32, 80, 1, 16), dtype=float32)
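The shapes in this error message suggest that a single image was passed without its leading batch axis, so the first image dimension was treated as the batch. The NumPy sketch below uses an all-zero stand-in array (an assumption, not the real data) to show which indexing styles keep the batch axis.

```python
import numpy as np

# Stand-in for the test images: 57 greyscale images of 80x80 pixels.
X_test = np.zeros((57, 80, 80, 1), dtype="float32")

single = X_test[17]                    # integer index drops the batch axis
with_newaxis = X_test[17][np.newaxis]  # add the batch axis back explicitly
with_list = X_test[[17]]               # list index keeps the batch axis
with_slice = X_test[17:18]             # so does a length-one slice

shapes = (single.shape, with_newaxis.shape, with_list.shape, with_slice.shape)
print(shapes)
```

Any of the last three forms gives the `(1, 80, 80, 1)` shape that a model with an `(80, 80, 1)` input expects for a batch of one.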

((80, 80, 1), (1, 80, 80, 1), (1, 80, 80, 1))

array([[ -0.9 , -16.88, -5.96, -8.63, 0.71, -8.88, -20.48, -1.18,
        -6.65, -5.54, -12.29, 1.33, -0.65, -9.87, 0.08, -1.54,
        -10.52, 9.84, 0.82, -14.32, -3.84, -2.05, 3.42, -3.63,
        3.45, -1.8 , -15.34, 1.41, -5.46, 0.71, -8.83, -7.8 ,
        -1.3 , -14.48, -7.45, -6.24, 0.79, 0.81, -2.67, 3.57,
        -10.11, -19.98, -15.31, -9.78, -6.08, -5.74, 6.31, -11.98,
        -4.25, 1.01, -3.32, -4.78, -2.51, -7.78, -8.14, -8.22,
        -5.13]], dtype=float32)

Predict on the test set II

Error Analysis

Error analysis (setup)

def plot_error_analysis(X_train, y_train, X_test, y_test, y_pred, class_names):

plt.figure(figsize=(4, 10))

num_errors = np.sum(y_pred != y_test)

err_num = 0

for i in range(X_test.shape[0]):

if y_pred[i] != y_test[i]:

ax = plt.subplot(num_errors, 2, 2*err_num + 1)

plt.imshow(X_test[i].astype("uint8"), cmap="gray")

plt.title(f"Guessed '{class_names[y_pred[i]]}' True '{class_names[y_test[i]]}'")

plt.axis("off")

actual_pred_char_ind = np.argmax(y_test == y_pred[i])

ax = plt.subplot(num_errors, 2, 2*err_num + 2)

plt.imshow(X_val[actual_pred_char_ind].astype("uint8"), cmap="gray")

plt.title(f"A real '{class_names[y_pred[i]]}'")

plt.axis("off")

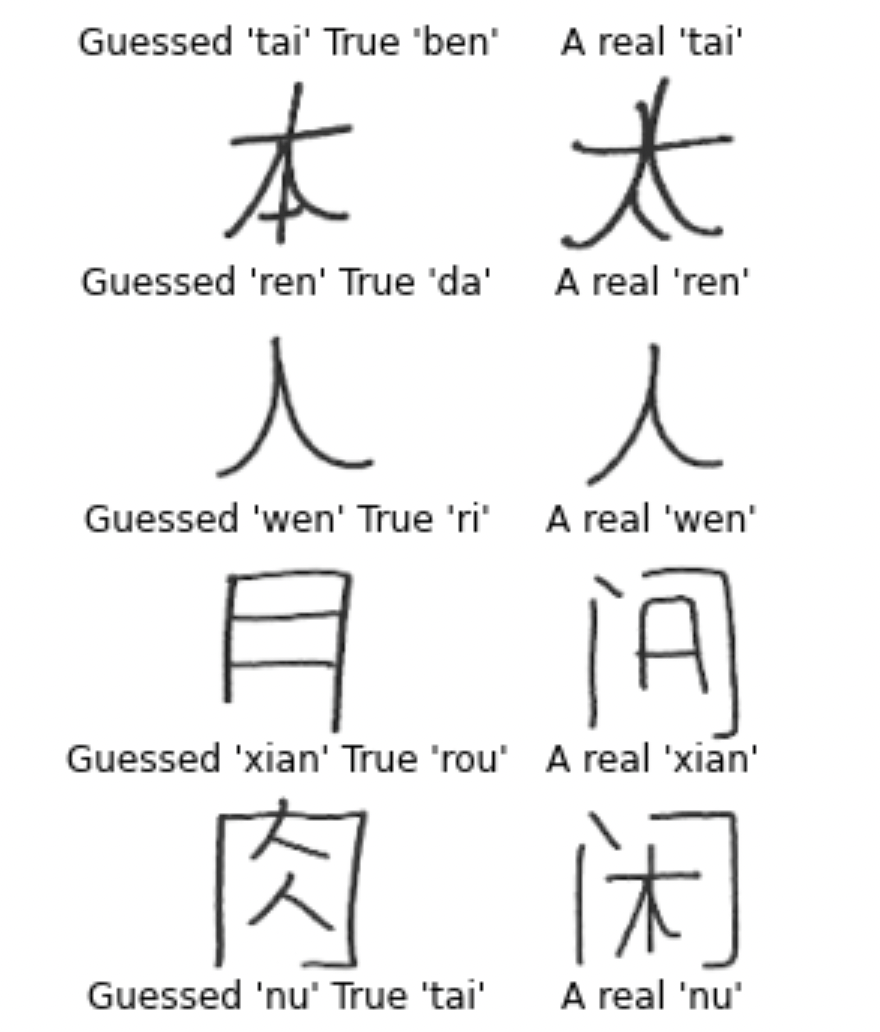

err_num += 1 Error analysis I

Extract from first assessment of test errors.

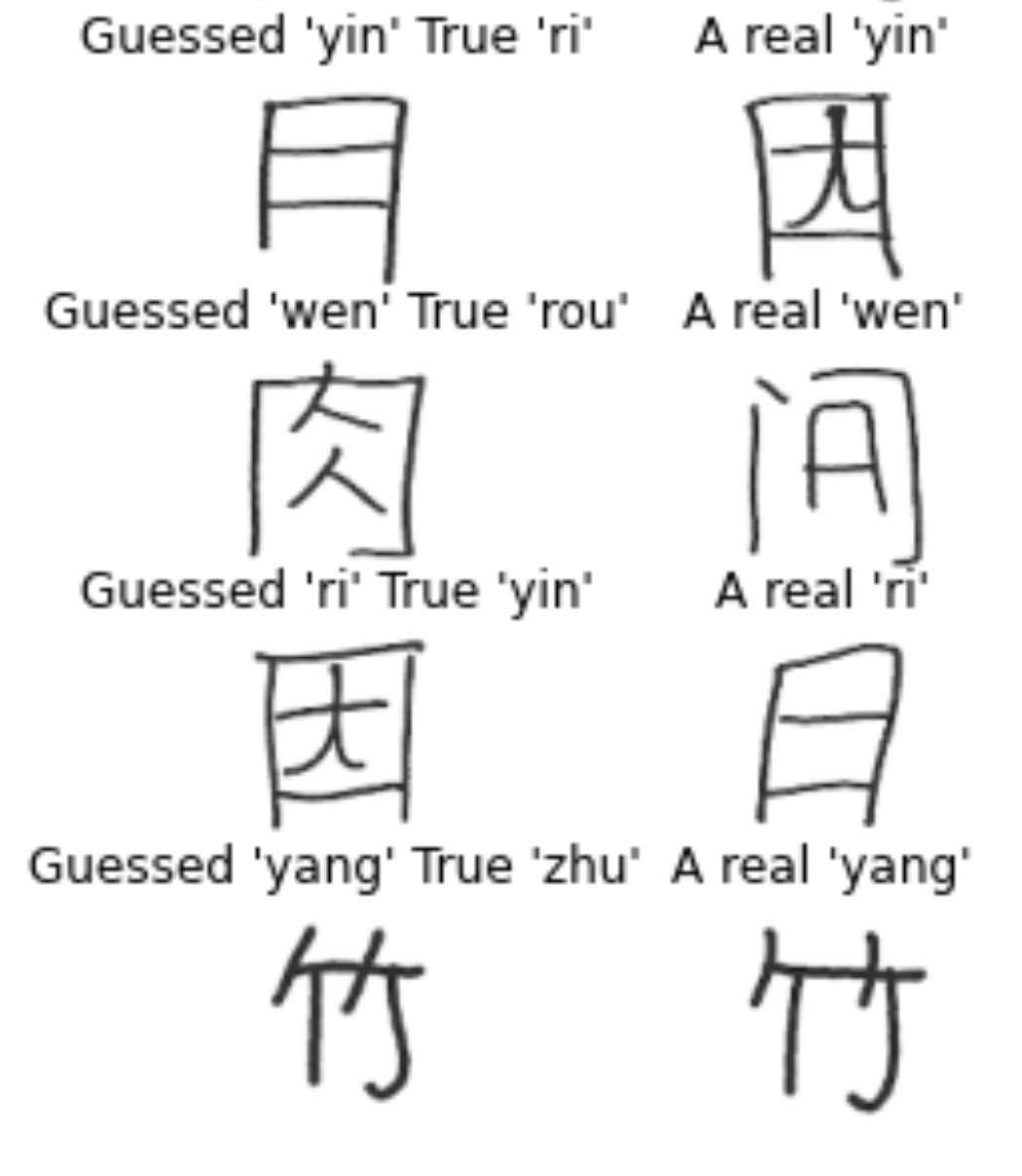

Error analysis II

Extract from second assessment of test errors.

Error analysis III

Error analysis IV

Confidence of predictions

y_pred = keras.ops.convert_to_numpy(
    keras.activations.softmax(model(X_test))
)
y_pred_class = np.argmax(y_pred, axis=1)
y_pred_prob = y_pred[np.arange(y_pred.shape[0]), y_pred_class]

confidence_when_correct = y_pred_prob[y_pred_class == y_test]
confidence_when_wrong = y_pred_prob[y_pred_class != y_test]

Hyperparameter tuning

Trial & error

Frankly, a lot of this is just ‘enlightened’ trial and error.

Source: Twitter.
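Trial and error can at least be automated. The sketch below shows the idea behind a random search in plain Python: sample a configuration, score it, and keep the best one. The search space borrows the ranges used in the Keras Tuner example in this section, while `toy_validation_loss` is a made-up formula standing in for "train a model and report its validation loss".

```python
import math
import random


def sample_hyperparameters(rng):
    """Draw one configuration from the (hypothetical) search space."""
    return {
        "neurons": rng.choice([4, 8, 16, 32, 64, 128, 256]),
        "activation": rng.choice(["relu", "leaky_relu", "tanh"]),
        # Log-uniform between 1e-4 and 1e-2: uniform in the exponent.
        "lr": 10 ** rng.uniform(-4, -2),
    }


def toy_validation_loss(config):
    """Made-up objective with its minimum near lr=1e-3 and 32 neurons."""
    return (math.log10(config["lr"]) + 3) ** 2 + abs(math.log2(config["neurons"]) - 5)


def random_search(max_trials, seed=0):
    rng = random.Random(seed)
    trials = []
    for _ in range(max_trials):
        config = sample_hyperparameters(rng)
        trials.append((toy_validation_loss(config), config))
    # The configuration with the lowest validation loss wins.
    return min(trials, key=lambda t: t[0]), trials


(best_loss, best_config), trials = random_search(max_trials=10)
print(best_loss, best_config)
```

Sampling the learning rate on a log scale spreads the trials evenly across orders of magnitude instead of concentrating them near the upper end of the range.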

Keras Tuner

import keras_tuner as kt

def build_model(hp):
    model = Sequential()
    model.add(
        Dense(
            hp.Choice("neurons", [4, 8, 16, 32, 64, 128, 256]),
            activation=hp.Choice("activation",
                ["relu", "leaky_relu", "tanh"]),
        )
    )
    model.add(Dense(1, activation="exponential"))

    learning_rate = hp.Float("lr",
        min_value=1e-4, max_value=1e-2, sampling="log")
    opt = keras.optimizers.Adam(learning_rate=learning_rate)

    model.compile(optimizer=opt, loss="poisson")

    return model

Do a random search

tuner = kt.RandomSearch(
    build_model,
    objective="val_loss",
    max_trials=10,
    directory="random-search")

es = EarlyStopping(patience=3,
    restore_best_weights=True)

tuner.search(X_train_sc, y_train,
    epochs=100, callbacks=[es],
    validation_data=(X_val_sc, y_val))

best_model = tuner.get_best_models()[0]

Reloading Tuner from random-search/untitled_project/tuner0.json

Tune layers separately

def build_model(hp):
    model = Sequential()

    for i in range(hp.Int("numHiddenLayers", 1, 3)):
        # Tune number of units in each layer separately.
        model.add(
            Dense(
                hp.Choice(f"neurons_{i}", [8, 16, 32, 64]),
                activation="relu"
            )
        )
    model.add(Dense(1, activation="exponential"))

    opt = keras.optimizers.Adam(learning_rate=0.0005)
    model.compile(optimizer=opt, loss="poisson")

    return model

Do a Bayesian search

tuner = kt.BayesianOptimization(
    build_model,
    objective="val_loss",
    directory="bayesian-search",
    max_trials=10)

es = EarlyStopping(patience=3,
    restore_best_weights=True)

tuner.search(X_train_sc, y_train,
    epochs=100, callbacks=[es],
    validation_data=(X_val_sc, y_val))

best_model = tuner.get_best_models()[0]

Reloading Tuner from bayesian-search/untitled_project/tuner0.json

Leveraging Solutions From Benchmark Problems

Demo: Object classification

Source: Teachable Machine, https://teachablemachine.withgoogle.com/.

How does that work?

… these models use a technique called transfer learning. There’s a pretrained neural network, and when you create your own classes, you can sort of picture that your classes are becoming the last layer or step of the neural net. Specifically, both the image and pose models are learning off of pretrained mobilenet models …

Benchmarks

CIFAR-10 / CIFAR-100 datasets from the Canadian Institute for Advanced Research

- CIFAR-10: 10 classes, 60000 32x32 colour images

- CIFAR-100: 100 classes, 60000 32x32 colour images

ImageNet and the ImageNet Large Scale Visual Recognition Challenge (ILSVRC); originally 1,000 synsets.

- In 2021: 14,197,122 labelled images from 21,841 synsets.

- See Keras applications for downloadable models.

LeNet-5 (1998)

| Layer | Type | Channels | Size | Kernel size | Stride | Activation |
|---|---|---|---|---|---|---|
| In | Input | 1 | 32×32 | – | – | – |
| C1 | Convolution | 6 | 28×28 | 5×5 | 1 | tanh |
| S2 | Avg pooling | 6 | 14×14 | 2×2 | 2 | tanh |
| C3 | Convolution | 16 | 10×10 | 5×5 | 1 | tanh |
| S4 | Avg pooling | 16 | 5×5 | 2×2 | 2 | tanh |
| C5 | Convolution | 120 | 1×1 | 5×5 | 1 | tanh |
| F6 | Fully connected | – | 84 | – | – | tanh |
| Out | Fully connected | – | 10 | – | – | RBF |

Note

MNIST images are 28×28 pixels, and with zero-padding (for a 5×5 kernel) that becomes 32×32.

Source: Aurélien Géron (2019), Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd Edition, Chapter 14.

AlexNet (2012)

| Layer | Type | Channels | Size | Kernel | Stride | Padding | Activation |
|---|---|---|---|---|---|---|---|
| In | Input | 3 | 227×227 | – | – | – | – |
| C1 | Convolution | 96 | 55×55 | 11×11 | 4 | valid | ReLU |
| S2 | Max pool | 96 | 27×27 | 3×3 | 2 | valid | – |
| C3 | Convolution | 256 | 27×27 | 5×5 | 1 | same | ReLU |
| S4 | Max pool | 256 | 13×13 | 3×3 | 2 | valid | – |
| C5 | Convolution | 384 | 13×13 | 3×3 | 1 | same | ReLU |
| C6 | Convolution | 384 | 13×13 | 3×3 | 1 | same | ReLU |
| C7 | Convolution | 256 | 13×13 | 3×3 | 1 | same | ReLU |
| S8 | Max pool | 256 | 6×6 | 3×3 | 2 | valid | – |
| F9 | Fully conn. | – | 4,096 | – | – | – | ReLU |
| F10 | Fully conn. | – | 4,096 | – | – | – | ReLU |
| Out | Fully conn. | – | 1,000 | – | – | – | Softmax |

Winner of the ILSVRC 2012 challenge (top-five error 17%), developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton.
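The "Size" columns in the LeNet and AlexNet tables follow from the kernel size, stride and padding of each layer. A short sketch of that arithmetic is given below; the helper names are made up, and the LeNet layers are assumed to use "valid" padding since that table has no padding column.

```python
import math


def output_size(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer along one axis."""
    if padding == "same":
        return math.ceil(size / stride)
    # "valid": only positions where the kernel fits entirely inside the input.
    return (size - kernel) // stride + 1


def trace(size, layers):
    """Apply each (kernel, stride, padding) layer in turn and record the sizes."""
    sizes = []
    for kernel, stride, padding in layers:
        size = output_size(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


# (kernel, stride, padding) for the convolution and pooling rows of each table.
lenet = [(5, 1, "valid"), (2, 2, "valid"), (5, 1, "valid"),
         (2, 2, "valid"), (5, 1, "valid")]
alexnet = [(11, 4, "valid"), (3, 2, "valid"), (5, 1, "same"), (3, 2, "valid"),
           (3, 1, "same"), (3, 1, "same"), (3, 1, "same"), (3, 2, "valid")]

lenet_sizes = trace(32, lenet)
alexnet_sizes = trace(227, alexnet)
print(lenet_sizes)
print(alexnet_sizes)
```

The same function covers pooling layers because, for the purpose of computing sizes, a pooling window behaves like a kernel.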

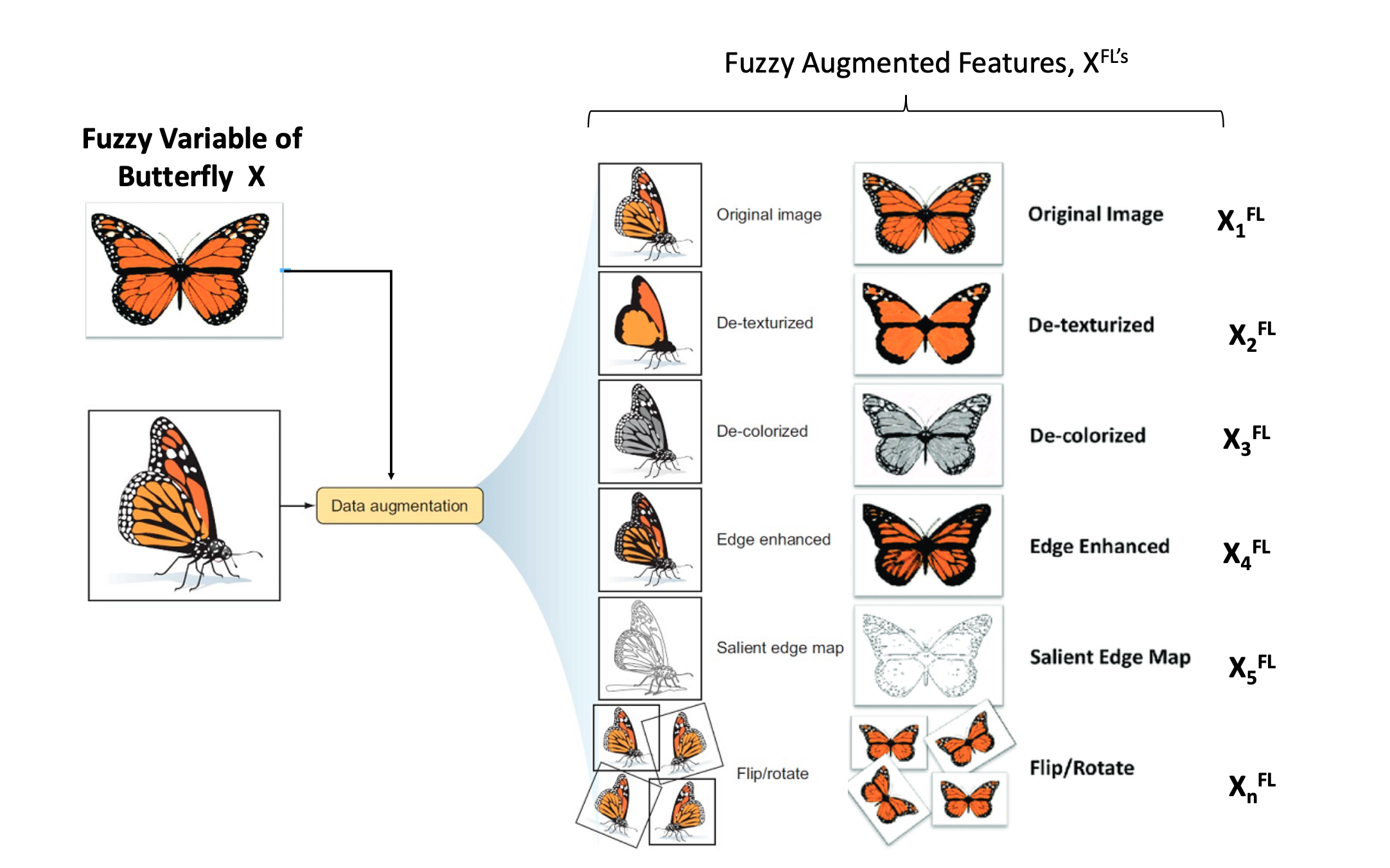

Data Augmentation

Examples of data augmentation.

Source: Buah et al. (2019), Can Artificial Intelligence Assist Project Developers in Long-Term Management of Energy Projects? The Case of CO2 Capture and Storage.
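As a rough illustration of what augmentation does, the NumPy sketch below produces randomly flipped and shifted copies of one image. It is a simplification with hypothetical names: `np.roll` wraps pixels around the edges rather than padding, and in Keras one would more likely use preprocessing layers such as `RandomFlip` and `RandomTranslation`.

```python
import numpy as np


def augment(image, rng, max_shift=2):
    """Return a randomly flipped and shifted copy of an (H, W, C) image."""
    out = image.copy()
    if rng.random() < 0.5:
        out = out[:, ::-1, :]                        # horizontal flip
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    out = np.roll(out, shift=(dy, dx), axis=(0, 1))  # wrap-around translation
    return out


rng = np.random.default_rng(0)
image = np.arange(8 * 8 * 1, dtype="uint8").reshape(8, 8, 1)
augmented = [augment(image, rng) for _ in range(4)]
print([a.shape for a in augmented])
```

Which transformations are sensible depends on the task: flipping a photo of an object usually preserves its label, whereas a mirrored handwritten character may no longer be a valid character at all.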

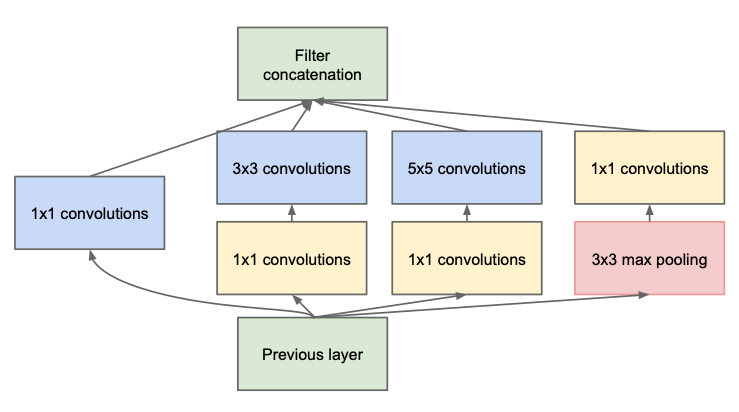

Inception module (2014)

Used in ILSVRC 2014 winning solution (top-5 error < 7%).

VGGNet was the runner-up.

Source: Szegedy, C. et al. (2014), Going deeper with convolutions, and KnowYourMeme.com.

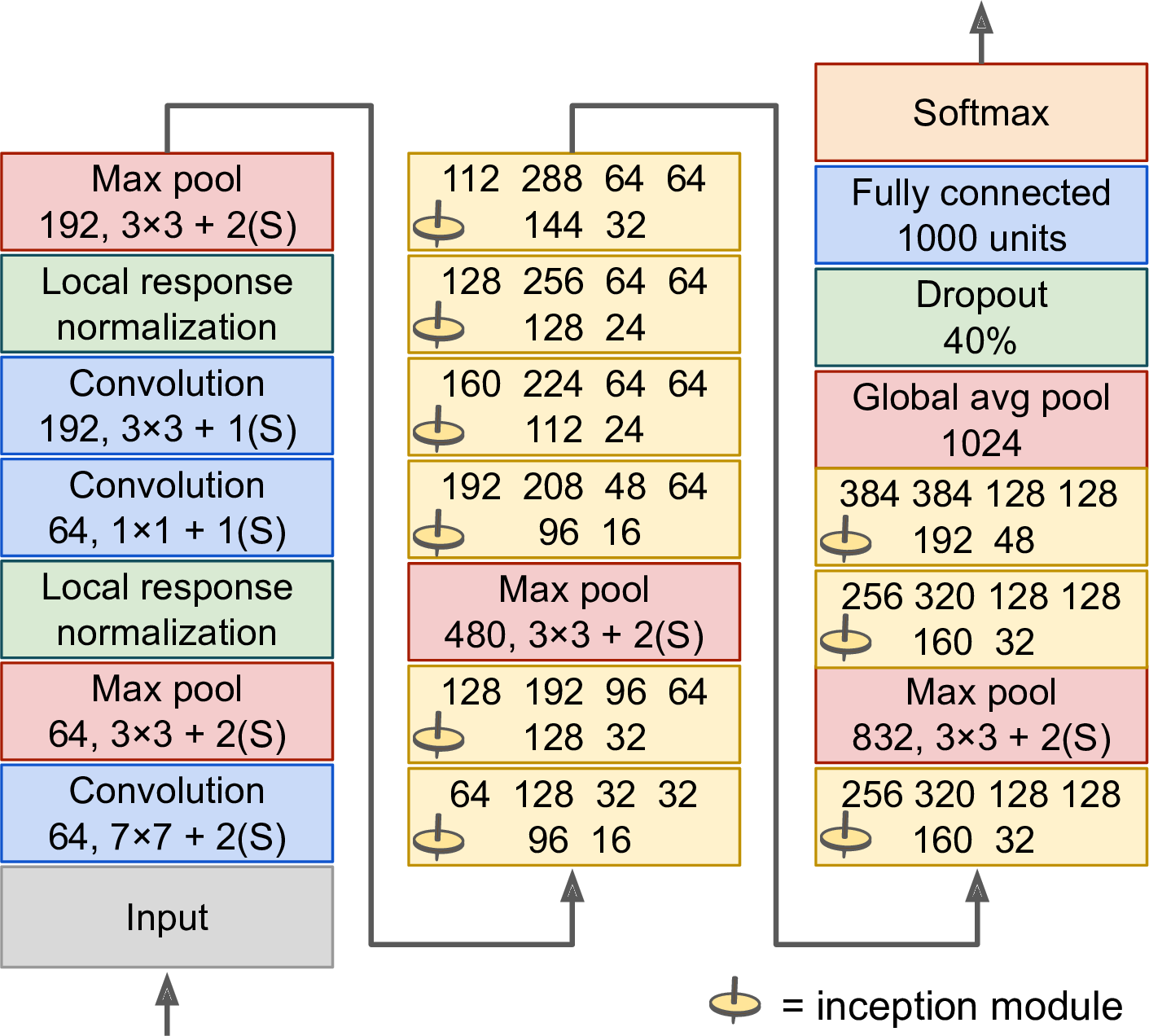

GoogLeNet / Inception_v1 (2014)

Schematic of the GoogLeNet architecture.

Source: Aurélien Géron (2019), Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd Edition, Figure 14-14.

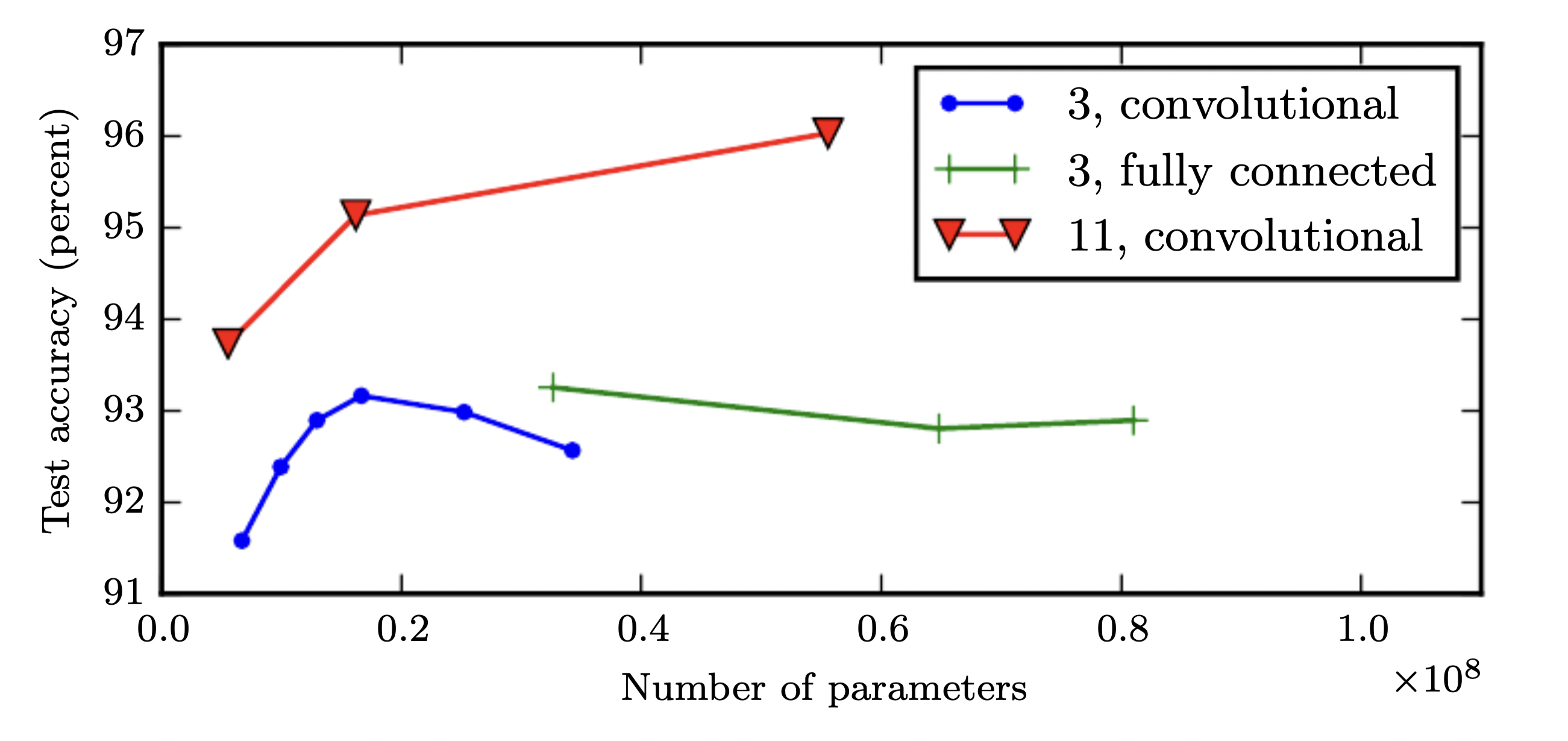

Depth is important for image tasks

Deeper models aren’t just better because they have more parameters. Model depth given in the legend. Accuracy is on the Street View House Numbers dataset.

Source: Goodfellow et al. (2016), Deep Learning, Figure 6.7.

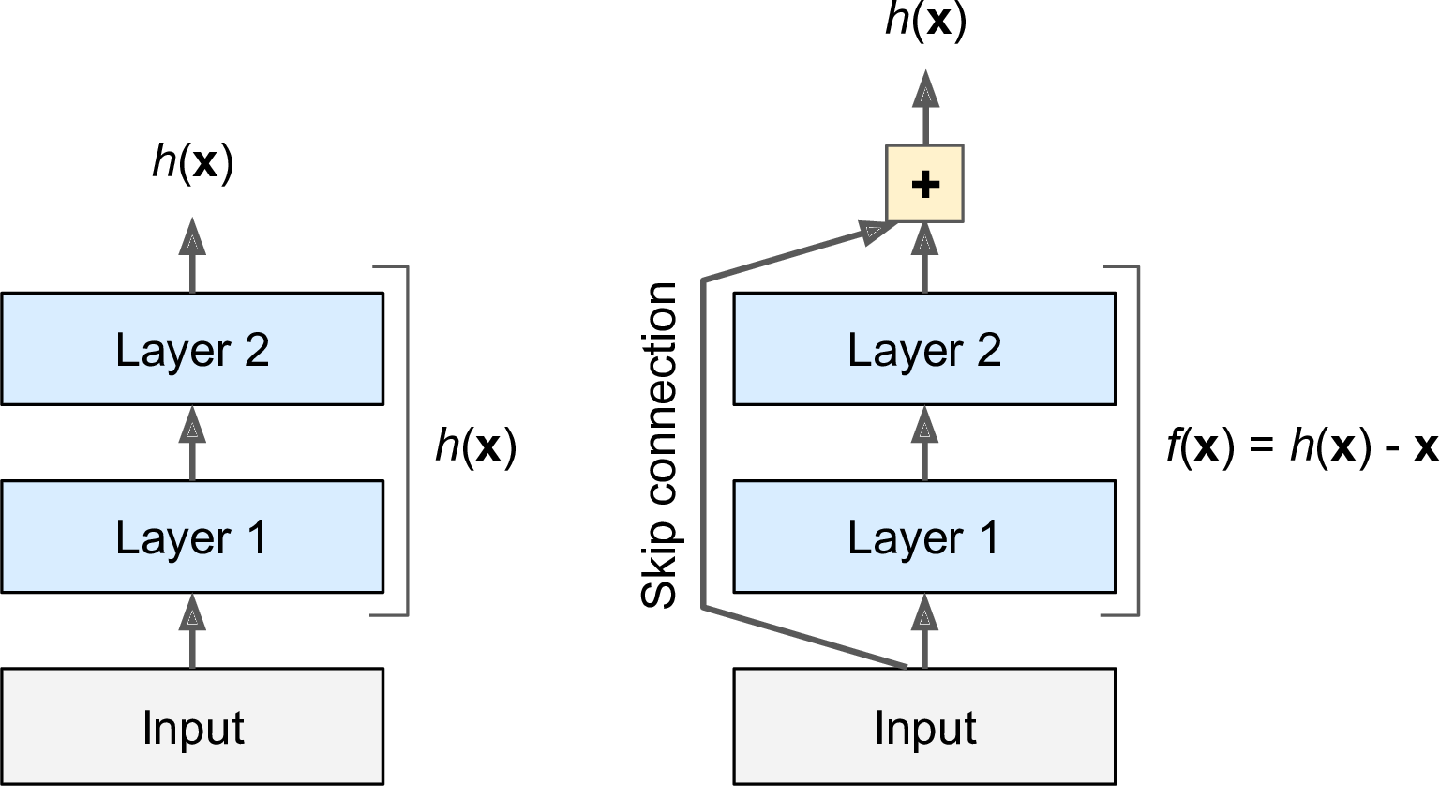

Residual connection

Illustration of a residual connection.

Source: Aurélien Géron (2019), Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd Edition, Figure 14-15.
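A residual connection adds a block's input back onto its output, so the layers inside only need to learn a correction to the identity. The NumPy sketch below uses two small dense layers for brevity; that is an assumption made for illustration, since ResNet itself uses convolutional layers with batch normalisation.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def residual_block(x, W1, W2):
    """Two dense layers whose output is added to the block's input."""
    h = relu(x @ W1)
    f = h @ W2            # the learned "residual" F(x)
    return relu(f + x)    # skip connection: add the input back


rng = np.random.default_rng(1)
x = rng.random((4, 8))    # batch of 4 non-negative inputs with 8 features

# With all-zero weights F(x) = 0, so the block passes its input straight through.
zeros = np.zeros((8, 8))
identity_out = residual_block(x, zeros, zeros)

# With random weights the block outputs x plus a learned correction.
W1, W2 = rng.normal(size=(8, 8)), rng.normal(size=(8, 8))
out = residual_block(x, W1, W2)
print(np.allclose(identity_out, x), out.shape)
```

Because an untrained block starts out close to the identity, stacking many of them does not immediately scramble the signal, which is one common explanation for why very deep residual networks remain trainable.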

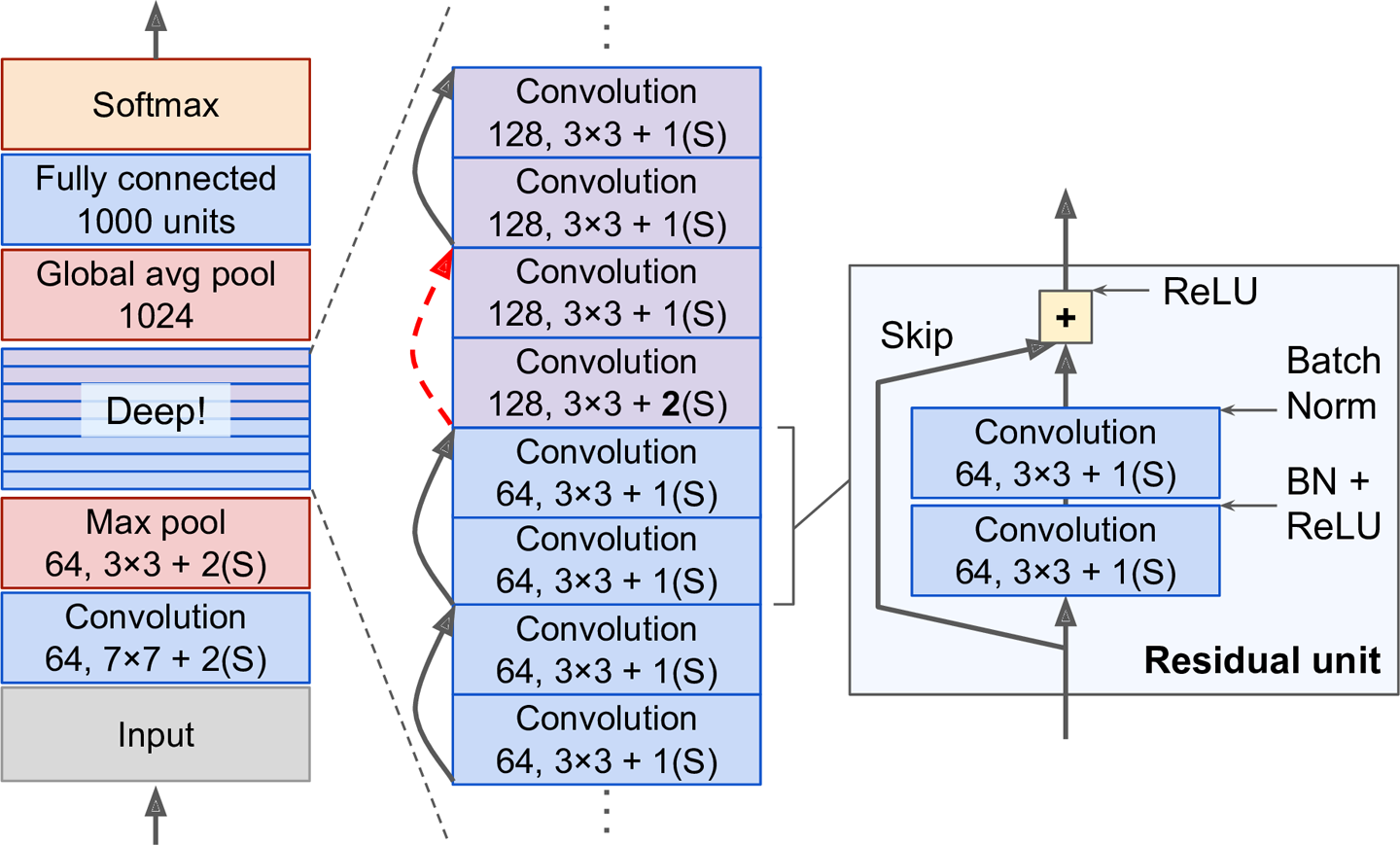

ResNet (2015)

ResNet won the ILSVRC 2015 challenge (top-5 error 3.6%), developed by Kaiming He et al.

Diagram of the ResNet architecture.

Source: Aurélien Géron (2019), Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd Edition, Figure 14-17.

Transfer Learning

Pretrained model

def classify_imagenet(paths, model_module, ModelClass, dims):
    # Load each image at the size the model expects, then apply its preprocessing.
    images = [keras.utils.load_img(path, target_size=dims) for path in paths]
    image_array = np.array([keras.utils.img_to_array(img) for img in images])
    inputs = model_module.preprocess_input(image_array)

    model = ModelClass(weights="imagenet")
    Y_proba = model.predict(inputs, verbose=0)
    # Report the three most probable ImageNet classes for each image.
    top_k = model_module.decode_predictions(Y_proba, top=3)
    for image_index in range(len(images)):
        print(f"Image #{image_index}:")
        for class_id, name, y_proba in top_k[image_index]:
            print(f" {class_id} - {name} {int(y_proba*100)}%")
        print()

Predicted classes (MobileNet)

Image #0:

n04350905 - suit 39%

n04591157 - Windsor_tie 34%

n02749479 - assault_rifle 13%

Image #1:

n03529860 - home_theater 25%

n02749479 - assault_rifle 9%

n04009552 - projector 5%

Image #2:

n03529860 - home_theater 9%

n03924679 - photocopier 7%

n02786058 - Band_Aid 6%

Predicted classes (MobileNetV2)

Image #0:

n04350905 - suit 34%

n04591157 - Windsor_tie 8%

n03630383 - lab_coat 7%

Image #1:

n04023962 - punching_bag 9%

n04336792 - stretcher 4%

n03529860 - home_theater 4%

Image #2:

n04404412 - television 42%

n02977058 - cash_machine 6%

n04152593 - screen 3%

Predicted classes (InceptionV3)

Image #0:

n04350905 - suit 25%

n04591157 - Windsor_tie 11%

n03630383 - lab_coat 6%

Image #1:

n04507155 - umbrella 52%

n04404412 - television 2%

n03529860 - home_theater 2%

Image #2:

n04404412 - television 17%

n02777292 - balance_beam 7%

n03942813 - ping-pong_ball 6%

Predicted classes (MobileNet)

Image #0:

n03483316 - hand_blower 21%

n03271574 - electric_fan 8%

n07579787 - plate 4%

Image #1:

n03942813 - ping-pong_ball 88%

n02782093 - balloon 3%

n04023962 - punching_bag 1%

Image #2:

n04557648 - water_bottle 31%

n04336792 - stretcher 14%

n03868863 - oxygen_mask 7%

Predicted classes (MobileNetV2)

Image #0:

n03868863 - oxygen_mask 37%

n03483316 - hand_blower 7%

n03271574 - electric_fan 7%

Image #1:

n03942813 - ping-pong_ball 29%

n04270147 - spatula 12%

n03970156 - plunger 8%

Image #2:

n02815834 - beaker 40%

n03868863 - oxygen_mask 16%

n04557648 - water_bottle 4%

Predicted classes (InceptionV3)

Image #0:

n02815834 - beaker 19%

n03179701 - desk 15%

n03868863 - oxygen_mask 9%

Image #1:

n03942813 - ping-pong_ball 87%

n02782093 - balloon 8%

n02790996 - barbell 0%

Image #2:

n04557648 - water_bottle 55%

n03983396 - pop_bottle 9%

n03868863 - oxygen_mask 7%

Transfer learning

# Pull in the base model we are transferring from.
base_model = keras.applications.Xception(
    weights='imagenet',  # Load weights pre-trained on ImageNet.
    input_shape=(150, 150, 3),
    include_top=False)  # Discard the ImageNet classifier at the top.

# Tell it not to update its weights.
base_model.trainable = False

# Make our new model on top of the base model.
inputs = keras.Input(shape=(150, 150, 3))
x = base_model(inputs, training=False)
x = keras.layers.GlobalAveragePooling2D()(x)
outputs = keras.layers.Dense(1)(x)
model = keras.Model(inputs, outputs)

# Compile and fit on our data.
model.compile(optimizer=keras.optimizers.Adam(),
    loss=keras.losses.BinaryCrossentropy(from_logits=True),
    metrics=[keras.metrics.BinaryAccuracy()])
model.fit(new_dataset, epochs=20, callbacks=..., validation_data=...)

Source: François Chollet (2020), Transfer learning & fine-tuning, Keras documentation.
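The freeze-then-train-a-new-head pattern can be mimicked without Keras. In the toy NumPy sketch below, everything is an assumption made for illustration: the "pretrained" base is just a fixed random projection, the dataset is synthetic, and the head is a single logit trained by plain gradient descent. The point is only that the base weights never change while the head learns.

```python
import numpy as np

rng = np.random.default_rng(42)

# "Pretrained" base: a fixed random projection followed by ReLU (frozen).
W_base = rng.normal(size=(20, 16))
W_base_before = W_base.copy()


def base_model(X):
    return np.maximum(X @ W_base, 0.0)


# New head: a single logit, trained from scratch.
w_head = np.zeros(16)
b_head = 0.0

# Synthetic binary task standing in for the new dataset.
X = rng.normal(size=(200, 20))
y = (X[:, 0] + X[:, 1] > 0).astype(float)


def loss_and_grads(features, y, w, b):
    logits = features @ w + b
    probs = 1.0 / (1.0 + np.exp(-logits))
    # Binary cross-entropy written in terms of the logits.
    loss = np.mean(np.logaddexp(0.0, logits) - y * logits)
    grad_logits = (probs - y) / len(y)
    return loss, features.T @ grad_logits, grad_logits.sum()


features = base_model(X)          # the base is frozen, so compute features once
initial_loss, _, _ = loss_and_grads(features, y, w_head, b_head)
for _ in range(500):
    loss, grad_w, grad_b = loss_and_grads(features, y, w_head, b_head)
    w_head -= 0.01 * grad_w       # only the head's parameters are updated
    b_head -= 0.01 * grad_b
final_loss, _, _ = loss_and_grads(features, y, w_head, b_head)
print(initial_loss, final_loss)
```

Freezing the base also makes training cheap: its outputs can be computed once and reused on every step, since no gradients flow back into it.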

Fine-tuning

# Unfreeze the base model
base_model.trainable = True

# It's important to recompile your model after you make any changes
# to the `trainable` attribute of any inner layer, so that your changes
# are taken into account
model.compile(
    optimizer=keras.optimizers.Adam(1e-5),  # Very low learning rate
    loss=keras.losses.BinaryCrossentropy(from_logits=True),
    metrics=[keras.metrics.BinaryAccuracy()])

# Train end-to-end. Be careful to stop before you overfit!
model.fit(new_dataset, epochs=10, callbacks=..., validation_data=...)

Caution

Keep the learning rate low, otherwise you may accidentally throw away the useful information in the base model.

Source: François Chollet (2020), Transfer learning & fine-tuning, Keras documentation.

Package Versions

from watermark import watermark
print(watermark(python=True, packages="keras,matplotlib,numpy,pandas,seaborn,scipy,torch,tensorflow,tf_keras"))

Python implementation: CPython

Python version : 3.11.9

IPython version : 8.24.0

keras : 3.3.3

matplotlib: 3.8.4

numpy : 1.26.4

pandas : 2.2.2

seaborn : 0.13.2

scipy : 1.11.0

torch : 2.0.1

tensorflow: 2.16.1

tf_keras : 2.16.0

Glossary

- AlexNet

- benchmark problems

- channels

- CIFAR-10 / CIFAR-100

- computer vision

- convolutional layer

- convolutional network

- error analysis

- filter

- GoogLeNet & Inception

- ImageNet challenge

- fine-tuning

- flatten layer

- kernel

- max pooling

- MNIST

- stride

- transfer learning